Solution Brief

High Speed Networking for Digital Media Creation

Table of Contents

– Introduction

– Requirements Overview

– Digital Media Technology Shifts

– High-Speed Networking

– Next-Generation Workstations

– Choice of Production Studios

– EOS: Extensibility

– Arista 7000 Switching Family

– Media Architecture

– Arista Solutions for Media

– Conclusion

Post Production and Centralized Content Management

Developments in VFX, CGI and animation are fulfilling the imagination of creative talent. At the same time, demand for such content is growing nearly as fast as the number and variety of display devices used by viewers. The entire creative, production and distribution networking infrastructure has become a mission-critical resource.

Accelerating Content Growth Requires

- Increased capacity to handle more visually immersive theatrical workloads and broadcast work streams

- Converged content creation, rights management, transcoding and distribution

- An open, standards-based transport that supports economical, commodity-based authoring, rendering, transcoding and storage systems

Introduction

Media production companies, whether in pre-production, post-production or real-time content management, are dealing with an explosion of data as they race their productions to market. While digital technology and file-based workflows have brought about a revolution in content creation, non-linear editing (NLE) and distribution, they have also introduced new challenges. Most obstacles, such as rapidly provisioning and reallocating technology infrastructure for projects, stem from the dissimilarity of application-specific technologies, and they affect other business policies and processes as well. Media and entertainment companies realize that to better utilize computing assets, they must not only consolidate their IT into a common infrastructure and manage storage more effectively, but also reduce the variety of deployed technologies to simplify operations and reduce costs. The foundation of this transformation is a high-speed switched networking infrastructure that allows many different endpoint systems to be connected reliably, efficiently, and at scale.

Requirements Overview

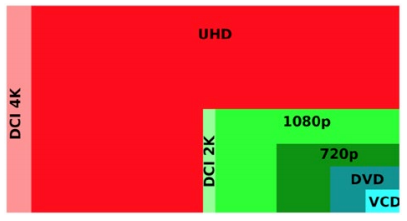

Imaging standards are evolving from HD to 2K and 4K, with 6K and 8K to follow, and each step sharply increases file sizes. 3D technology adds fuel to the fire by effectively doubling storage requirements. Today, raw video capture for a full-length feature film may require between one-half and two petabytes of storage. Even high-resolution digital intermediate imaging storage requirements are growing to hundreds of terabytes.

Figure 1: Comparison of image/pixel size

Other drivers of the media content explosion include today’s audiences, who demand improved graphic imagery and realistic Computer Generated Imagery (CGI). The cost of producing a modern blockbuster has skyrocketed to hundreds of millions of dollars. To help ensure production quality while containing costs, studios are deploying video game and modeling tools that assist in modeling an entire movie before incurring significant production costs. These tools simulate scenes, coordinate storylines and dialogue, check visuals, and model much of the action and integrated animation. These pre-production workloads control costs and verify creative work, while fine-tuning plotlines and action to avoid costly mistakes and budget overruns.

Figure 2: Standardized Editing Stations

This new world of digital production has forever changed the CGI landscape. To produce realistic-looking, high-resolution visual effects (VFX) and animation, modern CGI shops are investing in parallel computing, with hundreds of servers driving tens of thousands of compute cores. These HPC clusters include centralized storage systems and file caching accelerators, all interconnected through high-speed, ultra-reliable switching.

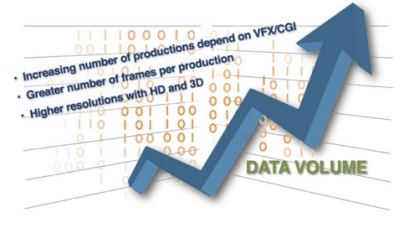

Figure 3: Similarities between render farms and HPC data centers are striking

The increasing dependence on the digital production infrastructure is raising the requirements for reliability and for non-stop administration and upkeep. Add the bandwidth requirements of I/O-heavy, file-oriented post-production workflows such as feature-length films or episodic TV, or the low latency requirements of real-time work streams such as live or near real-time edited broadcasts, and the result is a stringent feature list that only the most capable infrastructure providers can fulfill. But this new paradigm is also creating opportunities for innovative, specialized teams to be part of an industry that was once closed to everyone but the wealthiest players. The organizations that can innovate using these new digital technologies to produce high-quality content in the shortest timeframes are the ones that win and prosper.

While high-performance computing was previously affordable by only elite research and development communities, the cost of this technology has dropped significantly, to the point where high-performance parallel computing infrastructures are cost justified in many applications. These include: automotive safety testing, oil and gas exploration, drug exploration (bioscience research), financial trading and cloud hosting. Media and entertainment companies are also rapidly embracing these technologies.

Digital Media Technology Shifts

Smaller digital studios and post-production facilities leveraging these technologies are competing aggressively with larger organizations. These smaller studios produce HD or higher quality movies, ads, and special effects in many different streaming and playback formats. Remarkably, they deliver these projects with smaller budgets and in less time. Some studios are even outsourcing their workloads to the cloud. These production houses have refined their business models and workflows, understanding the costs and benefits of outsourcing compute in off-peak hours, the economic costs of offsite CPU and storage, and the development and administration costs of managing cloud-bursting. These organizations not only leverage high-performance compute and outsourcing, but they’ve also discovered the cost savings of automated administration and management tools that ensure the flawless execution of rendering/CGI workloads.

Figure 4: Digital Media Cloud Hosting

Today, the playing field is leveling as the industry moves away from expensive, proprietary systems toward more open, commodity-based platforms. This migration delivers dramatic price-performance advantages along with a strong return on investment in operating technology. In networking, for example, maintaining an isolated Fibre Channel network for storage is unnecessarily costly when converged Ethernet options delivering 10Gb, 25Gb, 40Gb, 50Gb and even 100Gb are interoperable and available from a variety of vendors. Innovative studios are benefiting from these technologies and are deploying HPC-like clusters within their data centers.

Five of the Top Technology Requirements:

- Centralized storage repositories hosting media libraries for content repurposing and more structured management of their assets. The most common storage deployed is file based, using caching or parallelization technologies with multiple 10Gb or 40Gb Ethernet interfaces to support large infrastructure and network fan-in designs.

- Rendering applications for post-production, sophisticated 3D, visual effects (VFX), animation, and finishing. These rendering applications use interconnected servers with high-performance 10Gb or 25Gb Ethernet switching platforms, requiring higher bandwidth 40GbE, 50GbE or 100GbE aggregation interconnects within the data center. The switching infrastructure must comply with data center class requirements for power and cooling efficiency along with cabling and operational automation requirements.

- Fast movement of large volumes of data (master frames) between artists’ workstations and centralized storage or compute. Options include very low latency (sub-microsecond) networks or buffered infrastructures with virtually lossless traffic characteristics. These options suit workflows such as remote rendering, virtual desktops using PC over IP (VDI/PCoIP), or near real-time editing of broadcast work streams. Each use case requires high-speed Ethernet switching using cost-effective twisted pair cabling and deep packet buffers to handle network speed changes between office workstations and data center server/storage nodes. Typical wiring closet switches are not built for these types of file transfers.

- As subscribers take advantage of content delivery across many device types, studios must deliver concurrent transcoding to multiple formats on the fly. This requires significant parallel server processing of the kind available through data center clustering. In some scenarios, the data rate exceeds 10Gbps. Fortunately, with the recent availability of industry standard 25Gb Ethernet, there are more economical alternatives to existing Layer 2 or Layer 3 load sharing schemes built on 10GbE. Scalable networking options help ensure that the networking infrastructure can keep pace with evolving formats and deliver these derivatives in real time.

- 7x24 uptime for all these use cases including storage, rendering, digital production and transcoding. CGI, rendering and post-production work are now real-time/all the time services. As studios work around the clock to get their final products delivered to many different types of global markets, their IT infrastructure must evolve to meet increasing demands and deliver constant up-time. As a result, studios are redesigning their infrastructure, moving many of their processes into modern data centers and using modern networking architecture. Administrators are leveraging high availability, active/active L2 features as well as L3 load sharing capabilities in the DC network. They are also expanding their management systems by using automation tools to support increasingly large and complex workloads while also minimizing the risks associated with human error. Reliability is greatly improved, while provisioning and reconfiguration are also expedited to improve productivity and reduce turnaround time.
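To make the "fast movement of large volumes of data" requirement concrete, the following minimal sketch estimates how long a batch of master frames takes to cross a single link at each of the Ethernet speeds named above. The 1 TB batch size and the 0.9 efficiency factor are illustrative assumptions, not figures from this brief, and the model ignores storage and host limits.

```python
# Nominal line rates, in gigabits per second, for the Ethernet speeds named above.
LINK_SPEEDS_GBPS = [1, 10, 25, 40, 100]


def transfer_seconds(size_bytes: float, link_gbps: float, efficiency: float = 0.9) -> float:
    """Idealized time to move size_bytes over one link.

    efficiency is an assumed fraction of line rate left after protocol
    overhead; 0.9 is an illustrative guess, not a measured value.
    """
    usable_bits_per_second = link_gbps * 1e9 * efficiency
    return size_bytes * 8 / usable_bits_per_second


if __name__ == "__main__":
    batch_bytes = 1e12  # hypothetical 1 TB batch of master frames
    for gbps in LINK_SPEEDS_GBPS:
        minutes = transfer_seconds(batch_bytes, gbps) / 60
        print(f"{gbps:>3} GbE: {minutes:8.1f} minutes")
```

Under these assumptions the transfer time scales inversely with link speed, which is why the step from 1GbE to 10GbE or 25GbE at the workstation and server edge matters so much for file-based workflows.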

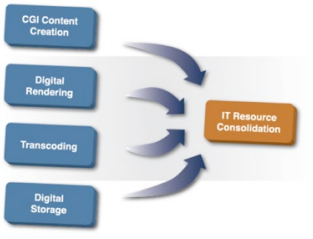

Figure 5: Digital media consolidation drivers

High-Speed Networking for Computer Animation

Images created by movie animators, scene designers, and lighting artists comprise large data sets that must be composited and rendered to create a movie. To shave hundreds of hours off post-production time, the data is fed to hundreds of computers running in parallel, all interconnected with high-speed Ethernet through wire-speed, non-blocking switches. Non-blocking Ethernet switching has become the de facto technology of choice.

Similarly, images in modern animation are represented digitally as data sets. As in traditional animation, the data sets representing animated cels are linked to form a full-length digitized movie. Animators, scene designers and lighting artists use computers to create and edit each data set that ultimately yields the visible frame.

The pixel count of a 4K frame is approximately four times that of an HD video image. The same frame rendered for 3D carries two images, one per eye, for roughly eight times the data of an HD frame. Modern digital movies may be presented at nearly thirty frames per second, faster than the traditional twenty-four frames per second. At four bytes of data per pixel, each frame can grow to hundreds of megabytes in size, and the finished movie grows to terabytes. The volume and size of animated frames, together with the increasingly complex rendering algorithms used to create more realistic images, drive the need to deploy more compute servers for faster rendering turnaround. These increasingly high fidelity images also drive the need for higher capacity storage as 4K 3D cinema scales to 8K RealD 3D™ imagery. Hundreds of computers presenting tens of thousands of cores are orchestrated in a rendering pipeline that splits image processing into parallel tasks. This saves days, weeks, and often months of production time.
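The frame-size arithmetic above can be sketched in a few lines. The raster dimensions below (1920x1080 for HD, 3840x2160 for 4K, 7680x4320 for 8K) are common consumer values assumed here for illustration; cinema rasters differ slightly. The four bytes per pixel figure comes from the text, and the sizes are for uncompressed frames.

```python
BYTES_PER_PIXEL = 4  # figure used in the text above

# Assumed raster sizes as (width, height); not taken from this brief.
RASTERS = {
    "HD": (1920, 1080),
    "4K": (3840, 2160),
    "8K": (7680, 4320),
}


def frame_bytes(width: int, height: int, stereo: bool = False) -> int:
    """Uncompressed size of one frame; a stereo (3D) frame stores two eyes."""
    eyes = 2 if stereo else 1
    return width * height * BYTES_PER_PIXEL * eyes


def film_bytes(width: int, height: int, minutes: int, fps: int, stereo: bool = False) -> int:
    """Uncompressed size of a finished film of the given length and frame rate."""
    return frame_bytes(width, height, stereo) * minutes * 60 * fps


if __name__ == "__main__":
    for name, (w, h) in RASTERS.items():
        mono = frame_bytes(w, h) / 1e6
        stereo = frame_bytes(w, h, stereo=True) / 1e6
        print(f"{name}: {mono:7.1f} MB per frame, {stereo:7.1f} MB in stereo")
    total = film_bytes(*RASTERS["4K"], minutes=120, fps=24, stereo=True)
    print(f"120-minute 4K stereo film at 24 fps: {total / 1e12:.1f} TB")
```

With these assumed rasters, only 8K stereo frames reach the hundreds-of-megabytes range; intermediate render passes, layers and multiple versions, which this sketch does not model, add to the totals a production actually stores.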

A full-length feature film with an average length of 90-120 minutes can require multiple petabytes of storage. For this reason, animated movie productions rely heavily on large numbers of multi-core servers, large file-based storage systems, and storage performance scaling with active data caching on flash and solid state disks (SSD). It follows that the network must also deliver wire-speed performance, with buffering to manage the momentary congestion that occurs as hundreds of compute nodes aggregate their output to storage and caching systems.
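The congestion described above, where many render nodes write to one storage port at once, can be approximated with a simple fluid model. This is a rough sketch under stated assumptions: every sender bursts at the same instant at full line rate, and the queue drains at the egress port speed. The node counts, burst size and link speeds are hypothetical.

```python
def peak_queue_bytes(senders: int, burst_bytes: float,
                     sender_gbps: float, egress_gbps: float) -> float:
    """Peak backlog at one egress port when all senders burst simultaneously.

    Fluid approximation: data arrives at senders * sender_gbps and drains
    at egress_gbps for the duration of one burst.
    """
    burst_seconds = burst_bytes * 8 / (sender_gbps * 1e9)
    arrived = senders * burst_bytes
    drained = egress_gbps * 1e9 * burst_seconds / 8
    return max(0.0, arrived - drained)


if __name__ == "__main__":
    # Hypothetical case: 100 render nodes at 10GbE, each sending a 64 KiB
    # burst toward a storage system attached at 40GbE.
    backlog = peak_queue_bytes(100, 64 * 1024, 10, 40)
    print(f"Peak backlog: {backlog / 2**20:.1f} MiB")
```

In this model any backlog that exceeds the buffer available to the port is dropped, which is the situation deep-buffer switches are meant to avoid.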

The Need for Speed with Next-Generation X86 Based Workstations and Servers

The migration from proprietary CGI workstations to high-performance compute and rendering clusters is already underway. Virtual desktops leveraging PC over IP technologies reduce CAPEX per seat, protect intellectual property and offer new flexibility for moving and repurposing equipment and personnel in the studio. Networking infrastructure must be upgraded to accommodate these technologies as well as other innovations, such as remote workstation rendering. Virtually all workstations now come with 1Gbps unshielded twisted pair (UTP) network connections, and servers come standard with 10Gb Ethernet UTP networking. Given these new capabilities, network architects must accommodate this increased capacity with modern, multi-rate switches featuring ample data buffering. If this is neglected, new applications and platforms can easily overrun legacy network infrastructures, creating I/O bottlenecks that lose data and degrade the productivity of the creative team.

Leveraging advancements in chip technologies, Ethernet server and storage networking options have evolved in the last five years. Price/performance has dramatically improved with the advent of 10G Ethernet on UTP for compute and client, as well as the emergence of 25Gb Ethernet for server connections at a cost comparable to 10GbE Small Form-factor Pluggable (SFP+) networking. 10GbE workstation connections are now less expensive than two legacy 1GbE connections, and 25GbE server connections are priced similarly to 10GbE networking. New generation storage platforms also offer 40Gb Ethernet services to support unprecedented IOPS, greatly improving the price/performance of storage services. In summary, scaling performance in storage and compute, combined with distributed rendering and remote-authoring solutions, is driving networking capacity requirements.

Therefore, switching platforms offering a range of speeds, including 1/10/25GbE and 40GbE connections with optional 100GbE interconnects, have become a hard requirement. These offerings have reached industry maturity and deliver backwards compatibility with much better price/performance than legacy 1Gbps networking technologies.

Figure 6: A time of exponential growth for digital media

Arista Networks is the Preferred Choice of Production Studios

Arista Networks is the leader in the cloud data center networking market, with hundreds of customers who have deployed Arista data center-class switches for their mission critical applications. Many of these applications require hundreds or thousands of server and compute nodes to: process millions of messaging streams within microseconds; stream hundreds of real-time broadcast, video, and movie streams across the internet; or reliably move large data files between data centers at wire speed. The one thing that all of these applications have in common is the requirement to accelerate time to market for the finished product. This means getting the work done faster, more efficiently, with less administrative overhead, and with no downtime or outage, all while being able to scale as more demands are placed on the network.

Arista offers a complete line of wire-speed, low latency, 1/10/25/40 and 100Gb Ethernet switches. This portfolio defines the new standard for data center networking solutions. Arista’s family of fixed and modular chassis switches uses the Extensible Operating System (EOS®), which is common to the whole product family. This operating system is modular, extensible, open and standards-based, supporting fast turn-up and extensibility of sophisticated and customizable switching features for the studio production data center. EOS is uniquely customer centric: administrators can create their own scripts and utilities for specialized network configurations and operations. No other Network Operating System (NOS) has the adaptability and vendor support to make this feasible for users. Customers can model network architectures virtually using a version of EOS that runs as a VM. This is just one example of how Arista redefines the process and tools used to design, deploy and maintain modern media production data centers.

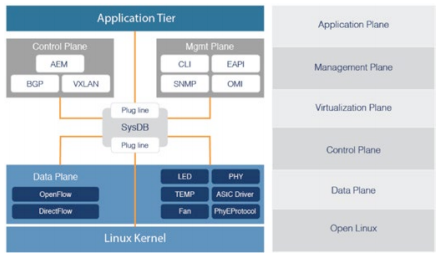

EOS: Extensibility, Automation and Reliability

Arista’s EOS is a fundamentally new architecture for the cloud. Its foundation is a unique multi-process state sharing architecture that separates state information and packet forwarding from protocol processing and application logic. In EOS, system state and data are stored and maintained in a highly efficient, centralized System Database (SysDB). The data stored in SysDB is accessed using an automated publish/subscribe/notify model. This architecturally distinct design principle supports self-healing resiliency in the software, easier software maintenance and module independence, higher software quality overall, and faster time-to-market for new features that customers require.

Figure 7: EOS Architecture
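The publish/subscribe/notify pattern described for SysDB can be illustrated with a toy in-memory state store. This is a generic sketch of the pattern only, not Arista's implementation; the class name, key names and callbacks are all hypothetical.

```python
from collections import defaultdict
from typing import Any, Callable, Dict, List


class StateDB:
    """Toy central state store with publish/subscribe/notify semantics."""

    def __init__(self) -> None:
        self._state: Dict[str, Any] = {}
        self._subscribers: Dict[str, List[Callable[[str, Any], None]]] = defaultdict(list)

    def subscribe(self, key: str, callback: Callable[[str, Any], None]) -> None:
        """Register a callback to be notified when key changes."""
        self._subscribers[key].append(callback)

    def publish(self, key: str, value: Any) -> None:
        """Store a new value and notify subscribers only if it changed."""
        if key in self._state and self._state[key] == value:
            return
        self._state[key] = value
        for callback in list(self._subscribers[key]):
            callback(key, value)

    def get(self, key: str, default: Any = None) -> Any:
        """Read the current value, so a restarted process can resynchronize."""
        return self._state.get(key, default)


if __name__ == "__main__":
    db = StateDB()
    # A hypothetical routing process reacts to link state written by another process.
    db.subscribe("Ethernet1/status", lambda k, v: print(f"routing agent saw {k} = {v}"))
    db.publish("Ethernet1/status", "up")
    db.publish("Ethernet1/status", "down")
```

Because processes in this pattern exchange state only through the store, a process that restarts can read the current values and carry on, which is the property the text associates with resiliency and module independence.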

With Open APIs and developer contributions available in public repositories like GitHub, administrators can create or adapt their own DevOps tools, addressing a wide range of operational needs such as provisioning, change management and monitoring. Administrators can implement solutions based on tools such as Puppet®, Ansible® or Chef®, and can integrate Arista provisioning tools such as ZTP Server.
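As one illustration of scripting against an open API, the sketch below builds a JSON-RPC request body of the kind used by Arista's eAPI command interface. The brief does not name a specific API, so treating eAPI as the target is an assumption; the commands are examples, and the block only constructs the payload without contacting a switch.

```python
import json
from typing import Any, Dict, Iterable


def build_run_cmds_request(commands: Iterable[str], request_id: str = "1",
                           fmt: str = "json") -> Dict[str, Any]:
    """Build a JSON-RPC 2.0 body that asks a switch to run CLI commands."""
    return {
        "jsonrpc": "2.0",
        "method": "runCmds",
        "params": {"version": 1, "cmds": list(commands), "format": fmt},
        "id": request_id,
    }


if __name__ == "__main__":
    # Example commands; a real tool would POST this body over HTTPS to the
    # switch's command API endpoint with appropriate credentials.
    body = build_run_cmds_request(["show version", "show interfaces status"])
    print(json.dumps(body, indent=2))
```

A provisioning or monitoring tool would wrap a request like this in its own inventory, authentication and error handling, none of which is shown here.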

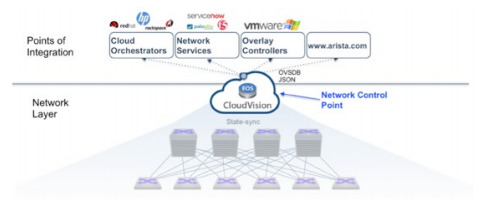

For administrators who have neither the time nor the personnel to develop administration systems, Arista has developed the CloudVision® suite of tools for network system administration. Available in an easy-to-deploy virtualized platform, CloudVision can address audit and compliance requirements, including device inventory, configuration, and image version management. CloudVision provides the means to automate image remediation without service impact by using Smart System Upgrade (SSU) and ECMP maintenance mechanisms for faultless, and

Figure 8: EOS CloudVision

Arista 7000 Switching Family: Platform Architecture Built to Serve Any Size Data Center

Arista’s portfolio offers many features that improve the performance of the media post-production pipeline and simplify migration of CGI and computer animation applications to the data center. Depending on the application, administrators can deploy low latency 1000BASE-T switches for broadcast work streams or deep buffer switches for lossless large-scale workflow transfers. Administrators can utilize installed RJ-45 connected copper cabling for 1Gbps workstation and legacy server connectivity in the data center. In most cases, the same UTP infrastructure can also be used to deploy 10GBASE-T networking on workstations or servers equipped with new 10GbE UTP adapters.

Figure 9: EOS CloudVision Graphical Interface

This flexibility saves time and money when retrofitting workstation seats or server racks for next generation production workloads. Similar price performance efficiencies exist for data center architectures that must grow beyond 10Gb Ethernet. The industry standard 25GbE specification uses the same twinax cable and fiber optics used for current 10Gb Ethernet.

Figure 10: Arista Switching Portfolio

25GbE server adapters and switch connections cost about the same as 10GbE, so managers can now deliver more than double the network bandwidth for the same budget. These switching platforms also provide cost effective 40GbE and 100GbE for high bandwidth applications such as storage and inter-switch connectivity. All Arista platforms are purpose built with reliability in mind. All platforms have N+1 redundant field replaceable units and are designed to run for many years. Last but not least, all Arista platforms run the same EOS binary image, which helps streamline qualification, reliability and maintenance. EOS also contributes to overall system reliability and maintainability by offering cloud proven, wire-speed Layer 2 Multi-chassis LAG (MLAG) and Layer 3 Equal Cost Multipath routing (ECMP) services. This ensures the data center infrastructure can increase throughput and reliability and sustain workloads faultlessly in the rare case of a link or system failure. Arista offers leading packet buffering capabilities to ensure traffic is not lost due to speed changes, congestion or microbursts. Studies demonstrate that buffering ensures traffic reliability, optimizes throughput, and provides an invaluable tool for integrating legacy equipment with modern platforms without compromising the performance of either.
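The ECMP load sharing mentioned above is commonly implemented by hashing each flow's header fields and using the result to pick one of several equal-cost next hops. The sketch below shows that general idea only; real switches use hardware hash functions, and the SHA-256 stand-in, addresses and spine names are hypothetical.

```python
import hashlib
from typing import Sequence, Tuple

# A flow is identified here by (source IP, destination IP, protocol, source port, destination port).
Flow = Tuple[str, str, str, int, int]


def pick_next_hop(flow: Flow, next_hops: Sequence[str]) -> str:
    """Map a flow to one of the equal-cost next hops.

    Every packet of the same flow hashes to the same path, which keeps
    packets in order while different flows spread across the links.
    """
    key = "|".join(str(field) for field in flow).encode()
    digest = hashlib.sha256(key).digest()
    index = int.from_bytes(digest[:8], "big") % len(next_hops)
    return next_hops[index]


if __name__ == "__main__":
    spines = ["spine1", "spine2", "spine3", "spine4"]
    for port in range(50000, 50006):
        flow = ("10.0.1.15", "10.0.9.20", "tcp", port, 2049)
        print(port, "->", pick_next_hop(flow, spines))
    # If one spine fails, flows are simply rehashed over the remaining paths.
    print(pick_next_hop(("10.0.1.15", "10.0.9.20", "tcp", 50000, 2049), spines[:3]))
```

Passing a shorter next-hop list after a failure, as in the last line, models how traffic continues over the surviving links.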

Media and Entertainment Architecture

As demand grows, administrators can efficiently scale their high-performance network by leveraging data center leaf-spine architectures: start with a single RU, high density, fixed top-of-rack switch, then interconnect racks with an aggregation spine layer of switches. Scale and bandwidth are determined by the speed of the cross connects, the number of distribution layer switches and the data paths between leaf and spine layers. Arista offers a full line of switches that supports this architecture.

For larger scale applications, Arista offers the modular 7500E series switching chassis with up to 1152 ports of fully non-blocking 10Gb Ethernet, 288 ports of 40Gb Ethernet and 96 ports of 100Gb Ethernet. The chassis provides 30Tbps of total bandwidth with 3.84Tbps of switching throughput per slot. It uses a Virtual Output Queuing (VOQ) architecture that ensures near 100% fabric efficiency without traffic loss or head of line blocking (HOLB). This critical feature ensures that workflows between hundreds of servers are not impacted by isolated network events or congestion toward a particular device. Finally, the 7500E series modular chassis offers future proofing for customers who will ultimately require higher density 40 and 100Gbps spine topologies.
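The leaf-spine scaling rule described above (scale set by cross-connect speed, the number of spine switches and the paths between layers) can be worked through numerically. The following is a minimal sketch with hypothetical port counts; it assumes every leaf connects to every spine with the same number of uplinks.

```python
from typing import Dict


def leaf_spine_capacity(spines: int, spine_ports: int, leaf_downlinks: int,
                        downlink_gbps: float, uplink_gbps: float,
                        uplinks_per_spine: int = 1) -> Dict[str, float]:
    """Size a two-tier leaf-spine fabric.

    Each leaf uses uplinks_per_spine ports on every spine, so the number
    of leaves is limited by the port count of a single spine switch.
    """
    leaves = spine_ports // uplinks_per_spine
    uplinks_per_leaf = spines * uplinks_per_spine
    downlink_bw = leaf_downlinks * downlink_gbps
    uplink_bw = uplinks_per_leaf * uplink_gbps
    return {
        "leaves": leaves,
        "server_ports": leaves * leaf_downlinks,
        "oversubscription": downlink_bw / uplink_bw,
    }


if __name__ == "__main__":
    # Hypothetical design: 4 spines with 32 x 40GbE ports each, and leaves
    # with 48 x 10GbE server ports plus one 40GbE uplink to every spine.
    print(leaf_spine_capacity(spines=4, spine_ports=32, leaf_downlinks=48,
                              downlink_gbps=10, uplink_gbps=40))
```

In this example the fabric supports 32 leaves and 1536 server ports at 3:1 oversubscription; adding spines or faster uplinks lowers the ratio, while higher density spines raise the leaf count.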

Arista Solutions for Media and Entertainment

Modular 7500E platform for large-scale digital rendering farms with an ability to deploy a 2-tier network architecture supporting scale-out server racks:

- 1152 line rate 1/10G ports at Layer 2 and Layer 3 with load balancing across redundant links

- Virtual Output Queuing and deep buffers for un-congested low latency performance between compute and storage nodes

- N+1 redundancy for all system components

- High density 288 X 40G and 96 X 100G connectivity ready for massive digital content creation and storage consolidation

- Top of rack 7050X switch for high density 10G server and storage rack build outs

- Flexible platform portfolio for both low latency 10G Fiber or low cost 10GBASE-T structured copper cabling

- Non-blocking wire rate L2/L3 performance for meeting time-to-market pressures

- Low power, efficient cooling, and Zero Touch Provisioning for reduced operational costs

- Deep buffer top of rack 7048T or low latency 7010T platforms for connecting 1GbE workstations to large-scale workflows or real-time broadcast work streams.

- Deep switch buffers handling speed transitions from 1GbE to 100GbE

- Common Extensible Operating System (EOS) across all Arista platforms

- Software extensibility and APIs in EOS and CloudVision to deliver tailored monitoring, automated provisioning and change management

- Smart System Upgrade (SSU) and MLAG features to support high availability and maintainability with sub-second infrastructure impact

Conclusion

The technology revolution in media rendering and post-production CGI is opening new possibilities in digital realism, previously unimaginable imagery and cinematic storytelling. Standardization in platforms and tools improves production economics, opening these capabilities to a wider audience. Production pipelines are greatly streamlined by automation and enhancements that are artist and administrator friendly. This streamlining and automation must also apply to the studio production network infrastructure.

Arista Networks leads the industry with a software-defined cloud networking approach, including innovative price/performance, scaling, automation and administration. Driving Arista’s best in class switching platforms is its innovative Extensible Operating System (EOS): a Linux based platform that supports automated healing, reconfiguration and extensibility. EOS is consistent across the entire product portfolio, ensuring reproducible reliability and scalability in the data center. Leveraging industry standard DevOps tools or Arista’s own CloudVision helps expedite provisioning, changes and upkeep in the growing data center while containing administrative costs. These automation tools also help avoid costly errors that may impact productivity. With Arista, media production companies can leverage the same management, performance and scaling efficiencies realized by high-performance computing (HPC) and cloud service providers. Studio production resources will perform better, be more reliable and cost less, leaving more time and resources for the studios’ artists, designers, and producers.

Table 1: Workflow Types and Recommended Platforms

| Workflow/Workstream | Requirement | Solution |
|---|---|---|
| CGI, VFX, Animation Authoring | 1G UTP connection, handle large traffic flows, nearly lossless | 7048T-A 1G switches with deep buffer VOQ architecture |
| Near real-time broadcast editing or compositing | 1G UTP connection, low latency switching | 7010T 1G switch with less than 3 μs end-to-end |
| Compute render platforms low bandwidth | Buffering for speed change and high speed uplinks | 7048T-A 1G switches with deep buffer VOQ architecture |
| Compute render platforms high bandwidth | 10G server connections and high speed upstream connections | 7050T/SX 10G switches with 40/100G uplinks |
| Highest performance render platforms | 10G and 25G server connections with support for future proof scalability | 7060CX and 7260CX Series 10/25/40/50/100G with low latency and wire speed performance |
| Content storage connections | High bandwidth and deep buffering for near lossless fidelity | Arista 7280E or 7500E deep buffer VOQ switches with 40/100G uplinks |
| Content transcoding systems | High bandwidth, low latency | Arista 7050SX or 7150S 10G/40G low latency switches. Arista 7060CX 40/100G low latency switches |
| Data center backbone connectivity | High bandwidth, virtually lossless, high density connectivity, 10-100G options | Arista 7500E modular platforms with deep buffers, VOQ architecture and connectivity scaling to over a thousand 10G ports and nearly 100 X 100G ports |
| Co-Lo Data Center Hosting | Scalable and flexible performance with a variety of interface speeds | Arista 7300X Spline systems for low latency and high performance |
| Virtualization scaling supporting VMware or OpenStack on the same DC infrastructure | Better, more economical scaling, sharing common infrastructure, reliable and self healing | Arista CloudVision VXLAN control services (VCS) supporting VMware, OpenStack and other OVSDB orchestration controllers |
| Infrastructure configuration management automation with self diagnostics and remediation | Automate change management, bug detection and remediation. Maintain code compliance without human intervention or production impact | Arista CloudVision Portal. Provides tools to intake existing configurations, perform sanity checks, validate against master bug reports and recommend and implement remediation automatically |

Copyright © 2017 Arista Networks, Inc. All rights reserved. CloudVision, and EOS are registered trademarks and Arista Networks is a trademark of Arista Networks, Inc. All other company names are trademarks of their respective holders. Information in this document is subject to change without notice. Certain features may not yet be available. Arista Networks, Inc. assumes no responsibility for any errors that may appear in this document. 09-0002-01