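Data-driven cloud networking takes an open approach to cloud networking, centered on a single, consistent software platform, the Arista EOS® network stack, and the Network Data Lake architecture (NetDL™), and applies artificial intelligence and machine learning (AI/ML) to automation and security challenges.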

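Arista's cloud networking extends the guiding principles of cloud-scale operators into a portfolio of cloud networking solutions that serve data center, hybrid cloud, campus, wide area, and low-latency environments. These principles include the use of open APIs, programmability at every layer, cloud automation capabilities, self-service zero-touch provisioning, and standards-based Universal Cloud Network (UCN) deployment architectures.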

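Cloud networking innovation began with the Arista Extensible Operating System (EOS®), an advanced new software platform that delivers switching, routing, state streaming, and telemetry capabilities across all Arista platforms. Arista EOS set a new standard for networking for large-scale cloud operators, paved the way for broad use of merchant silicon hardware in networks, and delivered exceptional reliability to service providers, enterprises, broadcasters, and others while significantly lowering deployment and operating costs.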

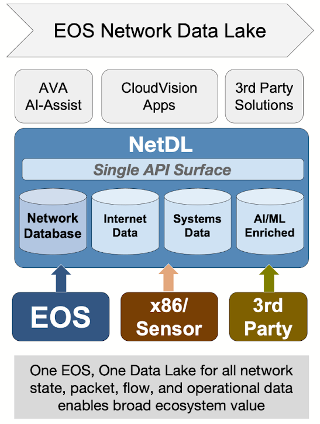

NetDL - EOS Network Data Lake

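The Arista EOS network stack architecture is the foundation for aggregating and consolidating streaming device state, telemetry, packet, flow, alert, sensor, and third-party data into a network data lake (Arista EOS NetDL™). Arista EOS NetDL brings together the diverse datasets needed to apply AI/ML techniques effectively in NetOps and SecOps environments. NetDL also provides a single API surface for accessing network and network-related data, which Arista and third-party applications can build on.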
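The idea of consolidating heterogeneous streams behind one query surface can be illustrated with a small conceptual sketch. This is not the NetDL API or any Arista interface: the `ToyDataLake` class, the `Record` type, the source labels, and the device names are all hypothetical and exist only to show how differently shaped records might be normalized and then queried together.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Record:
    """One normalized entry in the hypothetical data lake."""
    source: str       # illustrative label, e.g. "device_state", "flow", "alert"
    device: str       # device the record relates to
    timestamp: float  # seconds on a shared clock
    payload: dict     # source-specific fields, stored unchanged


class ToyDataLake:
    """Hypothetical in-memory store; not an Arista API."""

    def __init__(self):
        self._by_device = defaultdict(list)

    def ingest(self, source, device, timestamp, **payload):
        # Wrap every input in the same Record envelope, whatever its origin.
        record = Record(source, device, timestamp, payload)
        self._by_device[device].append(record)
        return record

    def query(self, device, start=float("-inf"), end=float("inf"), sources=None):
        # Single entry point: filter by device, time window, and optional source set.
        matches = [
            r for r in self._by_device.get(device, [])
            if start <= r.timestamp <= end
            and (sources is None or r.source in sources)
        ]
        return sorted(matches, key=lambda r: r.timestamp)


lake = ToyDataLake()
lake.ingest("flow", "leaf1", 101.5, src="10.0.0.1", dst="10.0.0.2", byte_count=1200)
lake.ingest("device_state", "leaf1", 100.0, interface="Ethernet1", status="down")
lake.ingest("alert", "leaf2", 102.0, message="high CPU")

# One call returns state and flow records for the same device in time order.
events = lake.query("leaf1", start=100.0, end=102.0)
```

Keeping the payload untouched while normalizing only the envelope (source, device, timestamp) is one common design choice for this kind of store: it lets new data sources be added without changing the query path.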

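EOS NetDL Characteristics

- It is essential for deriving intelligent decisions and insights from all available data, which is exposed and transmitted in large volumes and in many formats and contexts.

- Newly developed on top of Arista's existing EOS state-sharing and streaming approach, NetDL lets EOS establish the foundation for intelligent, data-driven networks and data-centric network operations platforms.

- It combines continuous, large-scale data collection, pre-processing analysis, and enrichment using state-of-the-art AI/ML-driven correlation techniques.

- Its algorithms can discover patterns, apply predictive analytics, and offer prescriptive solutions to complex problems.

- It allows an ecosystem of third-party applications and tools to ingest and use the enriched data.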

Arista AVA - AI and Machine Learning

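Arista Autonomous Virtual Assist (Arista AVA™) is Arista's AI technology. It uses machine learning and other AI techniques to enhance every aspect of pervasive visibility, continuous threat detection, and enforcement. AVA extends to a wide range of operational use cases. For example, AVA can address Network Detection and Response (NDR), quality of experience (QoE) management, and proactive NetOps challenges. Combined with the state and telemetry data from across the distributed network held in NetDL, distributed sensor networks, and third-party data sources, it brings network design automation and scalability to a new level while significantly reducing the burden of securing and supporting the network.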

CloudVision

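CloudVision® is Arista's turnkey platform for network operations, automation, and visibility. It uses the same state-sharing architecture and APIs as EOS NetDL to deliver a rich set of capabilities across the enterprise. CloudVision brings industry-leading domain-specific capabilities to data center, wired and wireless campus, and cloud networks, helping enterprises simplify network operations by breaking down traditionally siloed network management.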

Solution Ecosystem

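Arista AVA and NetDL also enable a cloud networking ecosystem of Arista strategic partners and ISVs to deliver intelligent, market- and customer-specific insights and solutions in areas such as automation with continuous integration pipelines, media and entertainment, cybersecurity, and application and network performance monitoring.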

Matt Leonard, Senior Director, Technology Partner Programs, Equinix

Vasu Jakkal, Vice President, Security, Compliance and Identity, Microsoft

Lee Klarich, Chief Product Officer, Palo Alto Networks

Thomas Anderson, Vice President, Red Hat Ansible Automation Platform, Red Hat

Slack Technologies, LLC (a subsidiary of Salesforce)

Bill Hustad, Vice President, Alliances and Channel Ecosystems, Splunk

Umesh Mahajan, Senior Vice President and General Manager, VMware

Velchamy Sankarlingam, President of Product and Engineering, Zoom

Punit Minocha, Senior Vice President, Business and Corporate Development, Zscaler

- Professional Services Data Sheet

- Arista Cloud Test Data Sheet

- Arista CI Pipeline Technical Brief

- EOS Architecture White Paper

- Arista Advantage

- AVA White Paper

- Arista Q&A Document with Andre Kindness on Data-Driven Networking

- AVD Deploy Data Sheet

Routing

- Routing Architecture Transformations and Use cases

- Solution Brief for IP Peering

- Spotify’s SDN Internet Router

- OpenConfig: the emerging industry standard API for network elements

Network Virtualization

- VXLAN Pseudowires White Paper

- Arista Networks & Nuage Integration

- Solving the Virtualization Conundrum White Paper

- VMware & Arista Network Virtualization Reference Design Guide for VMware vSphere® Environments

- NSX™ for vSphere with Arista CloudVision® Environments

- EOS CloudVision® & VMware NSX™ Solution Brief

- VXLAN: Scaling Data Center Designs

Design Guides

- Arista Universal Cloud Network Design Guide

- Big Data Applications Design Guide

- Data Center Interconnection (DCI) with VXLAN Design Guide

Technology Bulletins

Analyst Reports

Cloud Networking - Media

- ZKast on CI Pipeline/DevOps with Fred Hsu and Douglas Gourlay of Arista Networks

- Arista Continuous Integration (CI) Pipeline for Modern Network DevOps

- Arista Validated Designs (AVD) at US Signal

- Arista Networks Introducing EOS Network Data Lake (NetDL)

- Arista Networks NetDL Demo

- Tale of Opposite Architectures by Ken Duda

- NetDL press release

- Jayshree’s Blog: The Data Driven Enterprise

- Jayshree’s Blog: Arista Evolution to Data-Driven Networking