Virtual Edge Deployment

The Virtual Edge is available as a virtual machine that can be installed on standard hypervisors. This section discusses the prerequisites and the installation procedure for deploying a VeloCloud Virtual Edge on KVM and ESXi hypervisors.

Deployment Prerequisites for Virtual Edge

This section describes the requirements for deploying the Virtual Edge.

Virtual Edge Requirements

- Supports assignment of 2, 4, 8, or 10 vCPUs.

| Resource | 2 vCPU | 4 vCPU | 8 vCPU | 10 vCPU |
|---|---|---|---|---|
| Minimum Memory (DRAM) | 8 GB | 16 GB | 32 GB | 32 GB |
| Minimum Storage (Virtual Disk) | 8 GB | 8 GB | 16 GB | 16 GB |

- AES-NI CPU capability must be passed through to the Virtual Edge appliance.

- Supports up to 8 vNICs (by default, GE1 and GE2 are LAN ports and GE3-GE8 are WAN ports).

Note: Over-subscription of Virtual Edge resources such as CPU, memory, and storage is not supported.

Recommended Server Specifications

| NIC Chipset | Hardware | Specification |
|---|---|---|
| Intel 82599/82599ES | HP DL380G9 | HP DL 380 Datasheet |
| Intel X710/XL710 | Dell PowerEdge R640 | Dell PowerEdge R640 |
| Intel X710/XL710 | Supermicro SYS-6018U-TRTP+ | Supermicro SYS-6018U-TRTP+ |

Recommended NIC Specifications

| Hardware Manufacturer | Firmware Version | Host Driver for Ubuntu 20.04.6 | Host Driver for Ubuntu 22.04.2 | Host Driver for ESXi 7.0U3 | Host Driver for ESXi 8.0U1a |
|---|---|---|---|---|---|
| Dual Port Intel Corporation Ethernet Controller XL710 for 40GbE QSFP+ | 7.10 | 2.20.12 | 2.20.12 | 1.11.2.5 and 1.11.3.5 | 1.11.2.5 and 1.11.3.5 |
| Dual Port Intel Corporation Ethernet Controller X710 for 10GbE SFP+ | 7.10 | 2.20.12 | 2.20.12 | 1.11.2.5 and 1.11.3.5 | 1.11.2.5 and 1.11.3.5 |
| Quad Port Intel Corporation Ethernet Controller X710 for 10GbE SFP+ | 7.10 | 2.20.12 | 2.20.12 | 1.11.2.5 and 1.11.3.5 | 1.11.2.5 and 1.11.3.5 |

Supported Operating Systems

- Ubuntu Linux Distribution:

  - Ubuntu 20.04.6 LTS

  - Ubuntu 22.04.2 LTS

- VMware ESXi:

  - VMware ESXi 7.0 U3 with vSphere Web Client 7.0.

  - VMware ESXi 8.0 U1a with vSphere Web Client 8.0.

Firewall/NAT Requirements

- The firewall must allow outbound traffic from the Virtual Edge to TCP/443 (for communication with the Orchestrator).

- The firewall must allow outbound traffic from the Virtual Edge to the Internet on UDP/2426 (VCMP).
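Before deployment, you can check the TCP/443 path from a host on the same network segment as the Virtual Edge. The following Python sketch is a hypothetical helper and is not an Arista tool. The function name `tcp_reachable` is illustrative. It covers TCP/443 only: UDP/2426 is connectionless, so a connect-style test cannot show that VCMP traffic is allowed.

```python
"""Hypothetical TCP reachability check toward the Orchestrator (not an Arista tool)."""
import socket


def tcp_reachable(host: str, port: int = 443, timeout: float = 5.0) -> bool:
    """Return True if a TCP connection to host:port completes within timeout seconds."""
    try:
        # create_connection resolves the host and completes the TCP handshake.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers DNS failures, refused connections, and timeouts.
        return False
```

For example, `tcp_reachable("vco.example.com")` returns True only if a TCP session to port 443 can be opened. Here `vco.example.com` is a placeholder for your Orchestrator address.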

CPU Flags Requirements

For detailed information about CPU flags requirements to deploy Virtual Edge, see Special Considerations for Virtual Edge Deployment.
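On a Linux host or guest, you can inspect the CPU flags in `/proc/cpuinfo`. The following Python sketch is a hypothetical helper that compares those flags against the required instruction sets: AES-NI, SSSE3, SSE4, RDTSC, RDSEED, and RDRAND. The mapping to Linux flag names is an assumption:

- `aes` for AES-NI
- `ssse3` for SSSE3
- both `sse4_1` and `sse4_2` for SSE4
- `tsc` for RDTSC
- `rdseed` for RDSEED
- `rdrand` for RDRAND

If you run it inside a guest, it shows what the hypervisor actually passes through.

```python
"""Hypothetical check of /proc/cpuinfo against the Virtual Edge CPU flag list."""
from pathlib import Path

# Assumed mapping from the documented instruction sets to Linux /proc/cpuinfo flags.
REQUIRED_FLAGS = {
    "AES-NI": {"aes"},
    "SSSE3": {"ssse3"},
    "SSE4": {"sse4_1", "sse4_2"},  # assumption: "SSE4" means SSE4.1 and SSE4.2
    "RDTSC": {"tsc"},
    "RDSEED": {"rdseed"},
    "RDRAND": {"rdrand"},
}


def parse_flags(cpuinfo_text: str) -> set[str]:
    """Return the flags from the first 'flags' line of /proc/cpuinfo text."""
    for line in cpuinfo_text.splitlines():
        key, sep, value = line.partition(":")
        if sep and key.strip() == "flags":
            return set(value.split())
    return set()


def missing_features(flags: set[str]) -> list[str]:
    """Return the documented feature names whose Linux flags are absent."""
    return [name for name, needed in REQUIRED_FLAGS.items() if not needed <= flags]


if __name__ == "__main__":
    cpuinfo = Path("/proc/cpuinfo")
    if cpuinfo.exists():
        missing = missing_features(parse_flags(cpuinfo.read_text()))
        print("Missing:", ", ".join(missing) if missing else "none")
```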

Special Considerations for Virtual Edge Deployment

- The SD-WAN Edge is a latency-sensitive application. Refer to the Arista Documentation for how to configure the virtual machine (VM) as a latency-sensitive application.

- Recommended host settings:

  - BIOS settings to achieve highest performance:

    - CPUs at 2.0 GHz or higher.

    - Enable Intel Virtualization Technology (Intel VT).

    - Deactivate hyper-threading.

  - The Virtual Edge supports the paravirtualized VMXNET3 vNIC and the SR-IOV passthrough vNIC:

    - When using VMXNET3, deactivate SR-IOV in the host BIOS and on ESXi.

    - When using SR-IOV, enable SR-IOV in the host BIOS and on ESXi.

    - To enable SR-IOV on KVM and ESXi, see:

      - KVM: Install Virtual Edge on KVM

      - ESXi: Install Virtual Edge on ESXi

  - Deactivate CPU power-saving features in the BIOS for maximum performance.

  - Activate CPU turbo.

  - The CPU must support the AES-NI, SSSE3, SSE4, RDTSC, RDSEED, and RDRAND instruction sets.

  - Reserve 2 cores for hypervisor workloads. For example, on a 10-core CPU system, run one 8-vCPU Virtual Edge or two 4-vCPU Virtual Edges, and leave the remaining 2 cores for hypervisor processes.

  - On a dual-socket host, ensure that the hypervisor assigns network adapters, memory, and CPU resources within the same socket (NUMA) boundary as the assigned vCPUs.


- Recommended VM settings:

  - CPU should be 100% reserved.

  - CPU shares should be High.

  - Memory should be 100% reserved.

  - Latency sensitivity should be High.

- The default username for the SD-WAN Edge SSH console is root.
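The core-reservation guidance is simple arithmetic. The following Python sketch is a hypothetical planning helper and is not part of the product. It checks a proposed set of Virtual Edges against a host's core count, using the minimums from the Virtual Edge Requirements table. It assumes one vCPU per physical core, because hyper-threading is deactivated and oversubscription is not supported.

```python
"""Hypothetical host-sizing helper for Virtual Edges (illustrative only)."""
from dataclasses import dataclass

# Minimums from the Virtual Edge Requirements table: vCPUs -> (memory GB, disk GB).
EDGE_SIZES = {2: (8, 8), 4: (16, 8), 8: (32, 16), 10: (32, 16)}
# Cores left for hypervisor processes, per the Special Considerations recommendation.
RESERVED_HOST_CORES = 2


@dataclass
class EdgeDemand:
    vcpus: int
    memory_gb: int
    disk_gb: int


def plan(host_cores: int, edge_vcpus: list[int]) -> list[EdgeDemand]:
    """Return per-Edge resource demands, or raise ValueError if the plan does not fit.

    Assumes one vCPU per physical core (hyper-threading off, no oversubscription).
    """
    demands = []
    for vcpus in edge_vcpus:
        if vcpus not in EDGE_SIZES:
            raise ValueError(f"unsupported vCPU count: {vcpus}")
        memory_gb, disk_gb = EDGE_SIZES[vcpus]
        demands.append(EdgeDemand(vcpus, memory_gb, disk_gb))
    available = host_cores - RESERVED_HOST_CORES
    requested = sum(d.vcpus for d in demands)
    if requested > available:
        raise ValueError(f"{requested} vCPUs requested, only {available} cores available")
    return demands
```

On a 10-core host, `plan(10, [8])` and `plan(10, [4, 4])` both fit within the 8 available cores, while `plan(10, [10])` raises `ValueError`. The returned `memory_gb` and `disk_gb` values can be summed to check host memory and storage.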

Cloud-init Creation

cloud-init is a Linux package that handles the early initialization of instances. Where the distribution includes it, cloud-init can configure many common instance parameters immediately after installation. The result is a fully functional instance configured from a set of inputs. The cloud-init configuration consists of two main files: the meta-data file and the user-data file. The meta-data file contains the network configuration for the Edge and identifies the Virtual Edge instance being installed. The user-data file contains the Edge software configuration.

cloud-init behavior is configured through the user-data, which the user supplies when launching the instance. This is typically done by attaching a secondary disk in ISO format that cloud-init looks for at first boot. The disk contains all the early configuration data applied at that time.

The Virtual Edge supports cloud-init, with all essential configuration packaged in an ISO image.

Create the Cloud-init Metadata and User-data Files

The final installation configuration options are set with a pair of cloud-init configuration files. The first installation configuration file contains the metadata. Create this file with a text editor and name it meta-data. This file provides information that identifies the instance of the Virtual Edge being installed. The instance-id can be any identifying name, and the local-hostname should be a host name that follows your site standards.

- Create the meta-data file that contains the instance name:

```
instance-id: vedge1
local-hostname: vedge1
```

- Add the network-interfaces section, shown below, to specify the WAN configuration. By default, all Edge WAN interfaces are configured for DHCP. Multiple interfaces can be specified.

```
root@ubuntu# cat meta-data
instance-id: Virtual-Edge
local-hostname: Virtual-Edge
network-interfaces:
  GE1:
    mac_address: 52:54:00:79:19:3d
  GE2:
    mac_address: 52:54:00:67:a2:53
  GE3:
    type: static
    ipaddr: 11.32.33.1
    mac_address: 52:54:00:e4:a4:3d
    netmask: 255.255.255.0
    gateway: 11.32.33.254
  GE4:
    type: static
    ipaddr: 11.32.34.1
    mac_address: 52:54:00:14:e5:bd
    netmask: 255.255.255.0
    gateway: 11.32.34.254
```

- Create the user-data file. This file contains three main modules: Orchestrator, Activation Code, and Ignore Certificates Errors.

| Module | Description |
|---|---|
| vco | IP address/URL of the Orchestrator. |
| activation_code | Activation code for the Virtual Edge. The activation code is generated while creating an Edge instance on the Orchestrator. |
| vco_ignore_cert_errors | Option to verify or ignore any certificate validity errors. |

Important: There is no default password in the Edge image. The password must be provided in the cloud-config:

```
#cloud-config
password: passw0rd
chpasswd: { expire: False }
ssh_pwauth: True
velocloud:
  vce:
    vco: 10.32.0.3
    activation_code: F54F-GG4S-XGFI
    vco_ignore_cert_errors: true
```
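If you provision several Virtual Edges, generating both files from parameters helps avoid indentation mistakes. The following Python sketch is hypothetical, and the function names are illustrative only. It reproduces the meta-data and user-data layouts from this section. It writes values verbatim without YAML quoting, so values containing characters such as `:` or `#` would need quoting first.

```python
"""Hypothetical generator for Virtual Edge cloud-init meta-data and user-data files."""
from pathlib import Path


def render_meta_data(instance_id: str, hostname: str,
                     interfaces: dict[str, dict[str, str]]) -> str:
    """Build meta-data text; an empty interfaces dict omits network-interfaces (DHCP)."""
    lines = [f"instance-id: {instance_id}", f"local-hostname: {hostname}"]
    if interfaces:
        lines.append("network-interfaces:")
        for name, settings in interfaces.items():
            lines.append(f"  {name}:")
            lines.extend(f"    {key}: {value}" for key, value in settings.items())
    return "\n".join(lines) + "\n"


def render_user_data(password: str, vco: str, activation_code: str,
                     ignore_cert_errors: bool) -> str:
    """Build the #cloud-config user-data with the velocloud/vce section."""
    return "\n".join([
        "#cloud-config",
        f"password: {password}",
        "chpasswd: { expire: False }",
        "ssh_pwauth: True",
        "velocloud:",
        "  vce:",
        f"    vco: {vco}",
        f"    activation_code: {activation_code}",
        f"    vco_ignore_cert_errors: {str(ignore_cert_errors).lower()}",
    ]) + "\n"


def write_seed_files(directory: str, meta_data: str, user_data: str) -> None:
    """Write meta-data and user-data into directory, ready for ISO packaging."""
    out = Path(directory)
    (out / "meta-data").write_text(meta_data)
    (out / "user-data").write_text(user_data)
```

For example, `render_user_data("passw0rd", "10.32.0.3", "F54F-GG4S-XGFI", True)` produces the same cloud-config as the example in this section.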

Create the ISO File

```
genisoimage -output seed.iso -volid cidata -joliet -rock user-data meta-data network-data
```

Including the network-interfaces section is optional. If the section is not present, the DHCP option is used by default.

After the ISO image is generated, transfer the image to a datastore on the host machine.
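The genisoimage step can also be scripted. The following Python sketch is a hypothetical wrapper. It behaves as follows:

- It uses the same options as the command in this section.
- By default it passes only `user-data` and `meta-data`. Add `network-data` to `files` if you created that file.
- It checks that the input files exist before calling genisoimage.
- It keeps the `cidata` volume ID, which is the label cloud-init's NoCloud datasource looks for.

```python
"""Hypothetical wrapper that packages cloud-init files into seed.iso with genisoimage."""
import shutil
import subprocess
from pathlib import Path


def genisoimage_argv(output: str, files: list[str]) -> list[str]:
    """Mirror the documented genisoimage options; the volume ID stays 'cidata'."""
    return ["genisoimage", "-output", output, "-volid", "cidata",
            "-joliet", "-rock", *files]


def build_seed_iso(workdir: str, output: str = "seed.iso",
                   files: tuple[str, ...] = ("user-data", "meta-data")) -> Path:
    """Create output inside workdir from the given files and return its path."""
    missing = [name for name in files if not Path(workdir, name).is_file()]
    if missing:
        raise FileNotFoundError(f"missing cloud-init files: {missing}")
    if shutil.which("genisoimage") is None:
        raise RuntimeError("genisoimage is not installed on this system")
    subprocess.run(genisoimage_argv(output, list(files)), cwd=workdir, check=True)
    return Path(workdir, output)
```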

Install Virtual Edge

You can install Virtual Edge on KVM and ESXi using a cloud-init config file. The cloud-init config contains interface configurations and the activation key of the Edge.

Ensure that you have created the cloud-init meta-data and user-data files and packaged them into an ISO image file. For steps, see Cloud-init Creation.

- SR-IOV

- Linux Bridge

- OpenVSwitch Bridge

- On KVM, see Install Virtual Edge on KVM.

- On ESXi, see Install Virtual Edge on ESXi.

Activate SR-IOV on KVM

To enable SR-IOV mode on KVM, perform the steps for your NIC:

- Intel 82599/82599ES

- Intel X710/XL710
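As a generic illustration only, Linux exposes SR-IOV virtual functions through the sysfs files `sriov_totalvfs` and `sriov_numvfs` under `/sys/class/net/<interface>/device/`. The following Python sketch is a hypothetical helper built on that kernel interface and is not the Arista procedure. SR-IOV must still be enabled in the host BIOS, and the interface name `ens1f0` is a placeholder. The kernel rejects changing a non-zero VF count directly, so the helper resets the count to 0 first.

```python
"""Hypothetical helper: set the SR-IOV virtual function count through Linux sysfs."""
from pathlib import Path


def set_num_vfs(iface: str, num_vfs: int, sysfs_root: str = "/sys") -> int:
    """Request num_vfs VFs on iface and return the resulting count (root required)."""
    dev = Path(sysfs_root, "class", "net", iface, "device")
    total = int((dev / "sriov_totalvfs").read_text())
    if not 0 <= num_vfs <= total:
        raise ValueError(f"{iface} supports at most {total} VFs, got {num_vfs}")
    numvfs = dev / "sriov_numvfs"
    current = int(numvfs.read_text())
    if current == num_vfs:
        return current
    if current != 0:
        numvfs.write_text("0")  # a non-zero count must be cleared before changing it
    if num_vfs != 0:
        numvfs.write_text(str(num_vfs))
    return int(numvfs.read_text())
```

Run as root, for example `set_num_vfs("ens1f0", 8)`. Then confirm the new functions with the validation command that follows.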

Validating SR-IOV (Optional)

```
lspci | grep -i Ethernet
01:10.0 Ethernet controller: Intel Corporation 82599 Ethernet Controller Virtual Function (rev
```

Install Virtual Edge on KVM

This section describes how to install and activate the Virtual Edge on KVM using a cloud-init config file.

If you decide to use SR-IOV mode, enable SR-IOV on KVM. For steps, see Activate SR-IOV on KVM.

To run the Virtual Edge on KVM using libvirt:

The cloud-init file already contains the Orchestrator IP address and the activation key, which was generated when the Virtual Edge was created on the Orchestrator. As the Virtual Edge powers up, it applies the settings from the cloud-init file, including the interface configuration. Once the Virtual Edge is online, it activates with the Orchestrator using the activation key.
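One common way to create a libvirt domain is the `virt-install` tool. The following Python sketch is a hypothetical helper that only assembles a `virt-install` command line. It is a swapped-in approach, not the documented Arista procedure. These details are assumptions:

- the image paths and bridge names
- the qcow2 disk format
- the `generic` OS variant

Memory is set to the table minimum for the chosen vCPU count, treated as GiB. `--cpu host-passthrough` is used so that flags such as AES-NI reach the guest. Review the printed command before running it.

```python
"""Hypothetical builder for a virt-install command that boots a Virtual Edge."""
import shlex

# Minimum memory per supported vCPU count, from the Virtual Edge Requirements table (GB).
MIN_MEMORY_GB = {2: 8, 4: 16, 8: 32, 10: 32}


def virt_install_argv(name: str, vcpus: int, edge_disk: str, seed_iso: str,
                      bridges: list[str]) -> list[str]:
    """Return virt-install arguments for one Edge with one virtio vNIC per bridge."""
    if vcpus not in MIN_MEMORY_GB:
        raise ValueError(f"unsupported vCPU count: {vcpus}")
    if len(bridges) > 8:
        raise ValueError("the Virtual Edge supports at most 8 vNICs")
    argv = [
        "virt-install",
        "--name", name,
        "--vcpus", str(vcpus),
        "--memory", str(MIN_MEMORY_GB[vcpus] * 1024),  # virt-install expects MiB
        "--cpu", "host-passthrough",  # expose host CPU flags such as AES-NI
        "--os-variant", "generic",  # assumption: no specific OS profile
        "--disk", f"path={edge_disk},format=qcow2,bus=virtio",  # assumed qcow2 image
        "--disk", f"path={seed_iso},device=cdrom",  # cloud-init seed ISO
        "--import",
        "--noautoconsole",
    ]
    for bridge in bridges:
        argv += ["--network", f"bridge={bridge},model=virtio"]
    return argv


if __name__ == "__main__":
    # Placeholder paths and bridge names; adjust them for your host.
    cmd = virt_install_argv("vedge1", 4, "/var/lib/libvirt/images/vedge1.qcow2",
                            "/var/lib/libvirt/images/seed.iso",
                            ["br-lan1", "br-lan2", "br-wan1"])
    print(shlex.join(cmd))
```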

Activate SR-IOV on ESXi

Enabling SR-IOV on ESXi is an optional configuration.

- Intel 82599/82599ES

- Intel X710/XL710

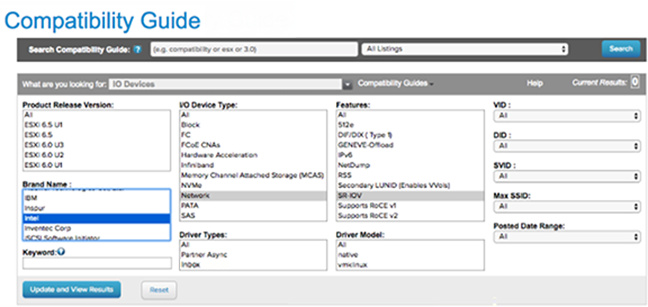

- Make sure that your NIC supports SR-IOV. Check the Hardware Compatibility List (HCL) at Arista Documentation using the following criteria:

  - Brand Name: Intel

  - I/O Device Type: Network

  - Features: SR-IOV

Figure 1. Compatibility Guide

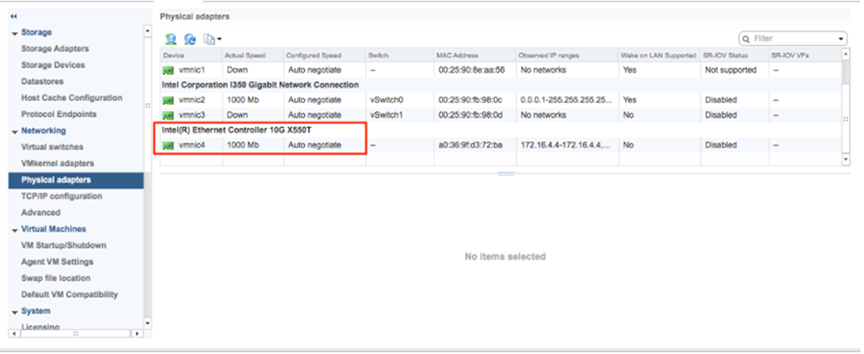

- Once you have a supported NIC, go to the specific ESXi host, select the Configure tab, and then choose Physical adapters.

Figure 2. Configure Physical Adapter

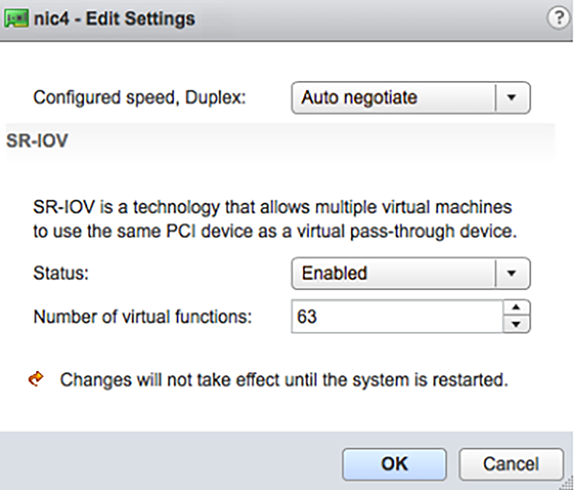

- Select Edit Settings. Change Status to Enabled and specify the number of virtual functions required. The supported number of virtual functions varies by NIC model.

Figure 3. Edit Settings

- Reboot the hypervisor.

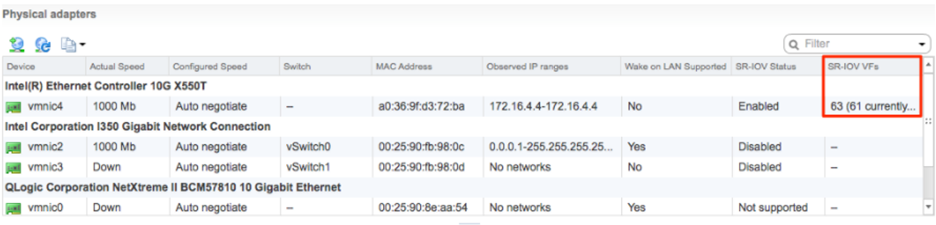

- If SR-IOV is enabled successfully, the number of Virtual Functions (VFs) is shown under the NIC after ESXi reboots.

Figure 4. Virtual Functions List

Install Virtual Edge on ESXi

This section describes how to install the Virtual Edge on ESXi.

If you decide to use SR-IOV mode, enable SR-IOV on ESXi. For steps, see Activate SR-IOV on ESXi.

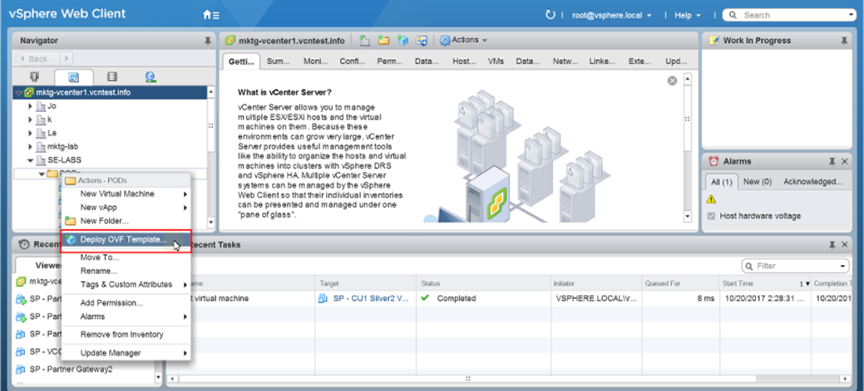

- Use the vSphere client to deploy an OVF template, and then select the Edge OVA file.

Figure 5. vSphere Web Client

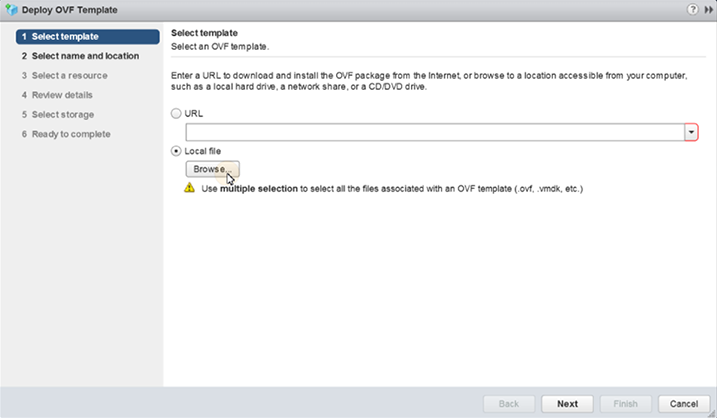

- Select an OVF template from a URL or a local file.

Figure 6. Deploy OVF Template

- Select a name and location for the virtual machine.

- Select a resource.

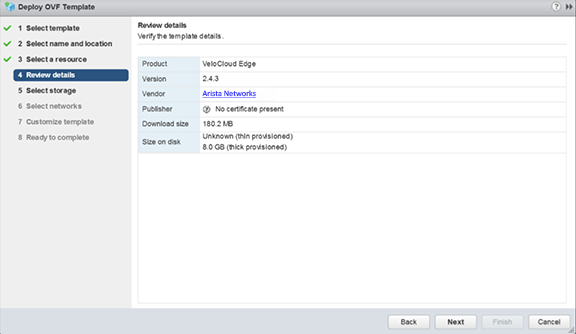

- Verify the template details.

Figure 7. Review Details

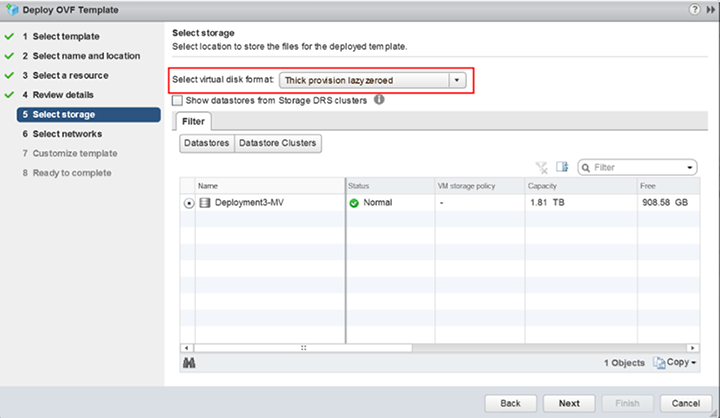

- Select the storage location to store the files for the deployment template.

Figure 8. Select Storage

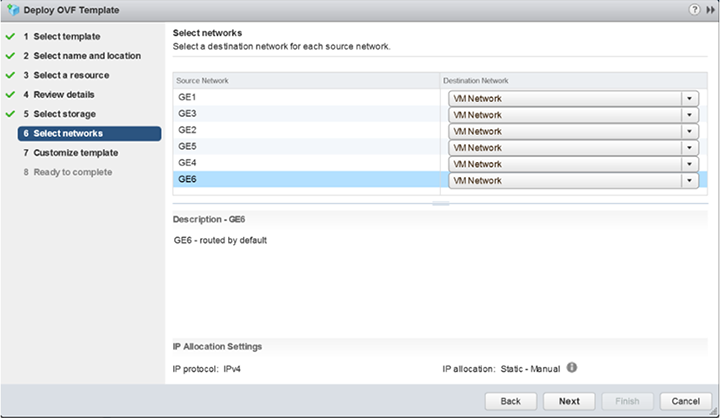

- Configure the networks for each of the interfaces.

Note: Skip this step if you are using a cloud-init file to provision the Virtual Edge on ESXi.

Figure 9. Select Networks

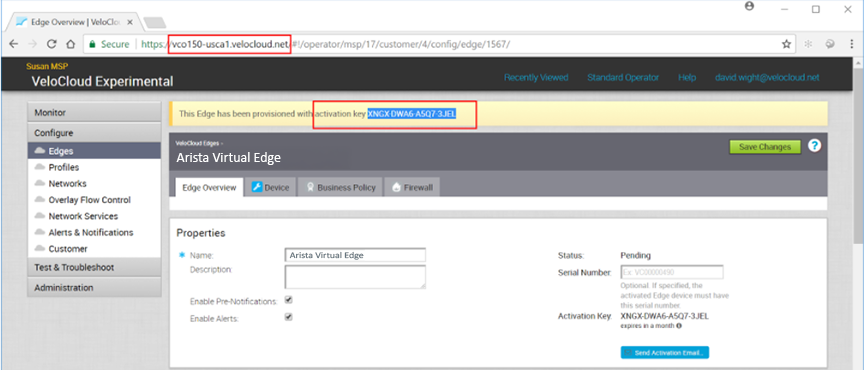

- Customize the template by specifying the deployment properties. The following image highlights:

- From the Orchestrator UI, retrieve the URL/IP Address. You will need this address for Step c below.

- Create a new Virtual Edge for the Enterprise. Once the Edge is created, copy the Activation Key. You will need the Activation Key for Step c below.

Figure 10. Create Virtual Edge

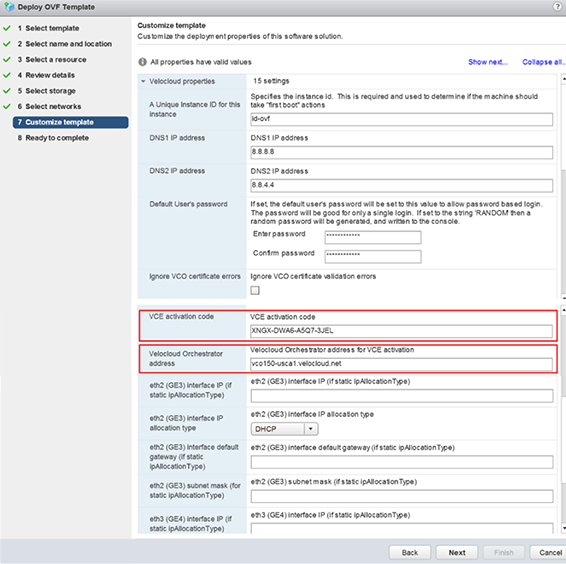

- On the customize template page shown in the image below, type in the Activation Code that you retrieved in Step b above, and the Orchestrator URL/IP Address retrieved in Step a above, into the corresponding fields.

Figure 11. Customize Template

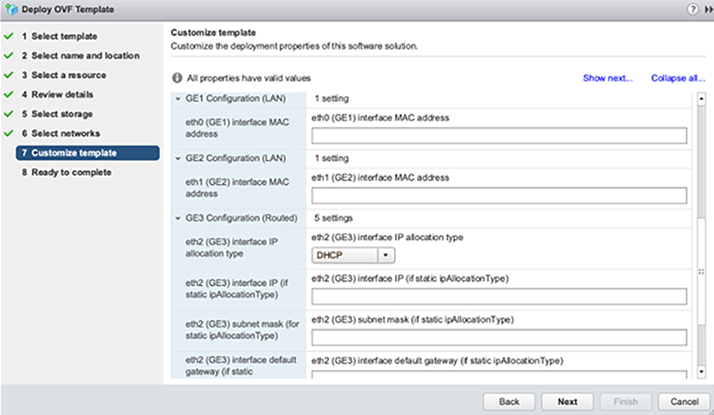

Figure 12. Customize Template - Edit Interface

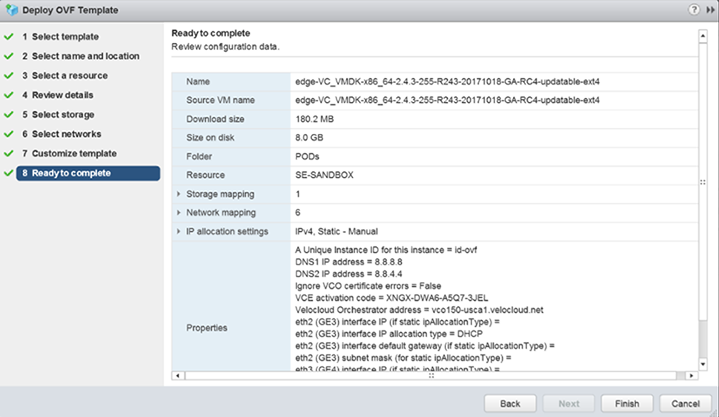

- Review the configuration data.

Figure 13. Deployment Completion

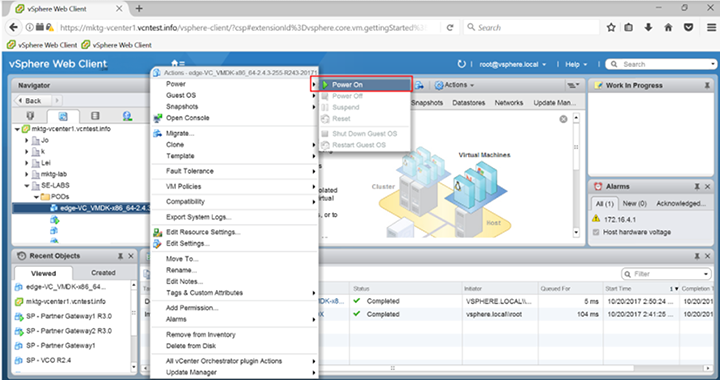

- Power on the Virtual Edge.

Figure 14. Virtual Edge Installation

Once the Edge powers up, it establishes connectivity to the Orchestrator.