White Paper

Broadcast Transition from SDI to Ethernet

Table of Contents

– Migration to Ethernet

– Software Defined Networking

– Solving Broadcast Requirements

– Time Synchronization Protocols

– Extensible API (eAPI)

– EOS Software Development Kit

– Multicast Features

– DirectFlow

– OpenFlow

– Seamless Frame Switching

– Network Timed Video Switching

– Destination Video Switching

– Source Timed Video Switching

– Audio Video Bridging (AVB)

– Broadcast Benefits of Ethernet

– Conclusion

Traditional broadcast infrastructure built with SDI baseband routers, coaxial cables and BNC connectors is transitioning to Ethernet using off-the-shelf network switches and SDN control. The increasing speeds supported by Ethernet switches, and the corresponding improvement in aggregate non-blocking switch and network throughput, have paved the way for the use of IP/Ethernet to transport critical broadcast applications in a cost-effective manner. This approach delivers the same robustness and stability as legacy SDI and MADI router operations, with greatly increased agility and flexibility to meet ever-evolving media formats.

Until recently, local facility-based TV broadcast signal routing has been accomplished using baseband switching matrices, which connect a specific input to a specific output through manual operator selections or via automation. The router was originally analog. Analog migrated to digital when NTSC was encoded using SDI (Serial Digital Interface) at 270Mbps. At that time, a router matrix of 256x256 was regarded as large. Starting around the year 2000, the need for 1.5Gbps HD-SDI routing surfaced to support digital television ATSC HD OTA (over-the-air) transmission in North America. This drove the need for router matrices to scale up to 1152x1152 to serve the considerably larger new HD-capable network facilities being built around the world, many devoted to professional sports. More recently the television industry agreed that 1080p50/60 should replace 1080 interlaced in some acquisition, production and contribution environments, requiring 1.5Gbps HD-SDI to be doubled to the 3Gbps standard of 3G-SDI. Broadcast facilities now require 75-ohm coaxial cable to carry 3Gbps, roughly 11x the bit rate of original SDI at 270Mbps, making the internal switching matrix designs very expensive to manufacture. The television industry needs new advanced technology to meet these performance requirements while maintaining the deterministic reliability of the current infrastructure.

The professional television industry has embraced IP technology in many areas, including video point-to-point transport and wireless HD-ENG backhaul. But TV-specific professional coaxial-based streaming formats (SDI, HD-SDI, 3G-SDI), which need TV-specific digital routing systems, were considered to require dedicated point-to-point technologies, and commercial IP-based data center networks were not implemented in the broadcast plant. While the TV broadcast industry was busy going from 270Mbps coax to 3Gbps coax, the high speed data center switch market was busy riding Moore’s law, creating and deploying cost-effective 10/25/40/50/100Gbps IP networks and related appliances.

The television industry is currently facing the need for tremendous amounts of content distribution as well as supporting new 4K-UHD formats that require the switching of uncompressed video at bitrates of 12Gbps and above. It is simply impractical, or even impossible, to accomplish this with traditional broadcast SDI router designs. Broadcasters have begun to embrace commercial off-the-shelf (COTS) data center class IP networking to enhance the efficiency of the broadcast plant. More importantly, the flexibility provided by packet-based architectures effectively supports the new reality of agile production and multi-faceted distribution strategies, with the added benefit of Ethernet economics instead of high-cost bespoke broadcast infrastructure. This transition from legacy broadcast systems to IP/Ethernet meets both the near and long term demands of production, contribution, distribution and content delivery.

Migration to an Ethernet/IP Infrastructure

Television companies put significant effort into making their network operation centers, master control and transmission facilities optimized for LIVE. Sophisticated mechanisms are developed to transmit signals in real-time without buffering, with redundancy schemes including hitless protection switching (without losing frame sync), and establishing full management software control over routers and infrastructure equipment. This allows the television engineer to maintain and operate IT-specific components in addition to the broadcast-specific equipment.

Increasing production performance and cost requirements can no longer be served by traditional switching and distribution, instead requiring a new architecture that leverages the cloud networking approach used in today’s high performance data centers. This will result in benefits such as:

- The television industry will gain from recent and ongoing advances in the very large Enterprise IT industry, such as high performance networks, low latency and jitter, mechanisms for time synchronization, the ability to support multicast at large scale and comprehensive network control via APIs.

- Implementing an IP “Data Center” in the broadcast plant will instantly increase switching capacity and reduce facility space requirements, and capacity will continue to grow as Moore’s law results in ongoing price/performance gains for network switches based on merchant silicon.

- The various digital broadcast standards such as SDI, HD-SDI, 3G-SDI, 4K/UHD, 24/32bit Audio in stereo, 5.1, 9.1, 22.2 can coexist in IP formats and be switched within the same IP routed network, eliminating the need to build and operate separate parallel networks.

The transition from SDI to Ethernet/IP infrastructure in professional television will take several years, but it is anticipated that all major television facilities requiring new and larger switching capabilities will select the commercial off-the-shelf (COTS) IP “Data Center” networking approach. However, legacy SDI routers and new Ethernet/IP networks will coexist for a number of years during the migration to a COTS switch infrastructure. During this transition, a smooth operational integration between the old and the new must be accomplished in a way that avoids burdening the production teams. The production staff should not see any material difference in operations whether a particular project’s signals are SDI-based or Ethernet/IP-centric, so that legacy SDI video streams and streams on the new infrastructure can be processed efficiently and routed seamlessly.

Software Defined Networking (SDN)

Software Defined Networking (SDN) works in conjunction with Network Management software to configure the underlying network, giving broadcast professionals the opportunity to (a) define the network’s operational parameters and (b) easily configure any special processing and temporary routing with a “push of a button or click of the mouse”. Multiple types of productions can be added to Software Controlled environments. Once everything is virtualized and orchestrated by a Studio Control System, the TV Studio/Facility can be operated very efficiently.

Automatic provisioning of the studio IP network and edge can be accomplished in multiple ways. For example, the Studio Control System can push out a new Software Defined Networking (SDN) configuration to the switches as a method to prepare the network for the desired workflow and video routing. Alternatively, the Ethernet switch can learn from the underlying control protocol to dynamically create the required end-to-end bandwidth reservations, QoS settings and programming of the stream multicast address.

Remote production cost savings are also enhanced. The IP connection from the facility infrastructure to a remote studio, with intelligent integration of control and orchestration, makes it possible to save time, personnel and travel costs, and can ultimately deliver a higher quality program through the use of tools available from a broadcaster’s primary larger-scale facility.

The use of network automation has become commonplace in deploying services within the walls of a typical data center. These same automation principles help reduce the complexity and the time to deployment for broadcast services over Ethernet. The depth of automation in broadcast varies from off-the-shelf controllers, to custom scripting using APIs, to standards-based protocols that create the foundational broadcast requirements within Ethernet networks.

Solving Broadcast Requirements with Switch Features

Arista switches are used in many large broadcast facilities today. Each facility has different requirements, so it is critical to deploy a network design from a portfolio of switch platforms that can meet the needs of the various applications. Below are technologies that have already helped broadcast facilities make the transition to COTS switches.

Time Synchronization Protocols

IEEE 1588-2008 Precision Time Protocol (PTP) enables a highly accurate timing solution with nanosecond accuracy. The protocol enables heterogeneous systems that include clocks of various inherent precision levels, resolutions, and stability to synchronize with a grandmaster clock. In isolated environments the Arista switch can provide a root timing reference by becoming the grandmaster for the network. Arista will also support the SMPTE 2059-2 profile. Alternatively, generalized Precision Time Protocol (gPTP) is also available on Arista switches to provide end-to-end clock synchronization for A/V network protocols such as Audio Video Bridging (AVB).

eAPI

The Arista Extensible API (eAPI) scales across hundreds of switches and provides an open programmatic interface to switch configuration and status. eAPI integrates directly with Arista EOS SysDB and delivers a standardized way to administer, configure, and manage Arista switches, regardless of switch type or placement within the network. Automation can be built into the Studio Control System to leverage eAPI for seamless configuration changes to the Arista switches, which relieves broadcast engineers of the need to be experts on the network switch CLI.

The Arista EOS eAPI is a simple and complete API that has proven to be an invaluable tool for building management plane applications, making it easy to develop solutions that interface with the device configuration and state information. Once the API is enabled, the switch accepts HTTP/HTTPS requests containing a list of industry standard CLI commands, and responds with machine-readable output and errors serialized in JSON. Hardening eAPI access can be accomplished through commonly used data center methods such as ACLs and AAA.
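The request and response exchange described above can be sketched with the Python standard library alone. This is a minimal illustration rather than a reference client: it assumes the JSON-RPC 2.0 `runCmds` request shape that eAPI is commonly documented to accept, and the helper names `build_eapi_request` and `parse_eapi_response`, the request id, and the canned reply are all hypothetical. Nothing is sent to a switch here; in practice the body would be POSTed over HTTPS to the switch's command API URL with AAA credentials.

```python
import json


def build_eapi_request(commands, request_id="studio-control-1", output_format="json"):
    """Build a JSON-RPC 2.0 body carrying a list of CLI commands (assumed runCmds shape)."""
    if not commands:
        raise ValueError("at least one CLI command is required")
    return {
        "jsonrpc": "2.0",
        "method": "runCmds",
        "params": {"version": 1, "cmds": list(commands), "format": output_format},
        "id": request_id,
    }


def parse_eapi_response(body_text):
    """Return the per-command results, or raise if the reply carries an error object."""
    reply = json.loads(body_text)
    if "error" in reply:
        err = reply["error"]
        raise RuntimeError(f"eAPI error {err.get('code')}: {err.get('message')}")
    return reply["result"]


if __name__ == "__main__":
    request = build_eapi_request(["show version"])
    print(json.dumps(request, indent=2))

    # Hypothetical reply used only to exercise the parser; real output has more fields.
    canned_reply = '{"jsonrpc": "2.0", "id": "studio-control-1", "result": [{"version": "x.y.z"}]}'
    print(parse_eapi_response(canned_reply))
```

Keeping request construction separate from transport makes it straightforward for a Studio Control System to log, validate, or replay the exact command list it intends to push.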

Building on the capabilities of eAPI, the Python Client for eAPI (pyeapi) is a language-specific client to make working with eAPI even easier. It is designed to assist network engineers, operators and devops teams to build eAPI applications faster without having to deal with the specifics of the eAPI implementation. It provides an easy to use Python library for building robust management plane applications.

EOS SDK

With the EOS Software Development Kit (SDK), customers can develop their own customized EOS applications in C++ or Python. These applications are first-class citizens of EOS alongside other EOS agents. The SDK provides programming language bindings to software abstractions available in Arista EOS, so 3rd party agents can access switch state and react to network events. These applications can, for example, manage interfaces, IP and MPLS routes, and Access Control Lists (ACLs), as well as use a range of APIs to communicate between the switch and monitoring or network controllers.

These apps, or “agents,” harness the full power of EOS, including event-driven, asynchronous behavior, high availability, and complete access to both Linux and EOS APIs, with the ability to react to events and program data plane forwarding in the same native, high-performance manner as existing agents within Arista EOS.

Comprehensive Multicast Features Support

Arista switches provide Layer 2 multicast filtering and Layer 3 routing features for applications requiring IP multicast services, which provide scalable fan-out of traffic delivery. The switches support over a thousand separate routed multicast sessions at wire speed without compromising other Layer 2/3 switching features. Arista switches provide standards-based multicast support for IGMP, IGMP snooping, PIM-SM, and MSDP to simplify and scale multicast deployments.

DirectFlow

DirectFlow is OpenFlow-style programming of the Arista switch where an external controller is not required. The same match and action capabilities that are available in OpenFlow are available via DirectFlow. In the simplest form of DirectFlow, programming of the data plane is performed directly via the CLI by setting the match and action requirements for the flow. More advanced uses of DirectFlow are performed through the use of scripts and/or 3rd party broadcast devices leveraging Arista’s eAPI capabilities for rapid manipulation of the flows passing through the switch.

OpenFlow

OpenFlow is an open standard protocol that provides access to the forwarding plane of a network switch/router to a centralized controller for traffic management and engineering. An OpenFlow switch separates the data path and the control path, with the control path abstracted to a separate open source or commercial controller. The OpenFlow switch and controller communicate via the OpenFlow protocol, which allows the network infrastructure to override normal data plane forwarding on specific flow(s) and apply a new forwarding decision for the specified application flow. Defining the specific application flow that requires a new data plane forwarding decision is accomplished through programming the network device with a “match” field. The traffic is then steered through the OpenFlow enabled network device by the use of “actions” for the flow entry. Within a Broadcast facility the use of OpenFlow establishes a deterministic path for application traffic across the network.

Broadcast Solutions with Arista

Seamless Frame-Accurate Switching

A Broadcast facility requires seamless “LIVE” switching between two sources, as classic SDI Routers have been doing for years. However, the classic SDI Router approach of switching synchronous streams in a very small real-time window at the RP-168 switch point, known as “network-timed switching”, is not easily duplicated in an IP network, because each port carries multiple signals (for example, a 10GbE port may carry six uncompressed 1.5Gbps HD signals) and COTS Ethernet switching gear is not designed to enact real-time control-plane decisions with such precision.

Network Timed Video Switching

Network Timed Switching leverages the COTS Ethernet FPGA to switch between video streams. Incoming video streams are fed into the switch FPGA and are buffered. For each incoming video stream, the buffer stores both the current and previous frame. When a video switch command arrives, the outgoing video stream will complete the transmission of the last frame of the first video stream and then begin transmitting the new stream at the proper RP-168 switch point. With an FPGA implementation, there is no additional delay or jitter at the frame switching boundary, ensuring a smooth transition. This allows for seamless switching between any number of input streams to one output stream.

Destination Timed Video Switching

Destination Timed Switching is the easiest switched video method to implement on COTS Ethernet switches. This implementation requires the destination edge device to temporarily subscribe to two multicast streams, during which twice the bandwidth of a single video signal is consumed to the destination. The receiver handles switching to the new stream at the correct point in the video raster, and then drops the original stream.

Source Timed Video Switching

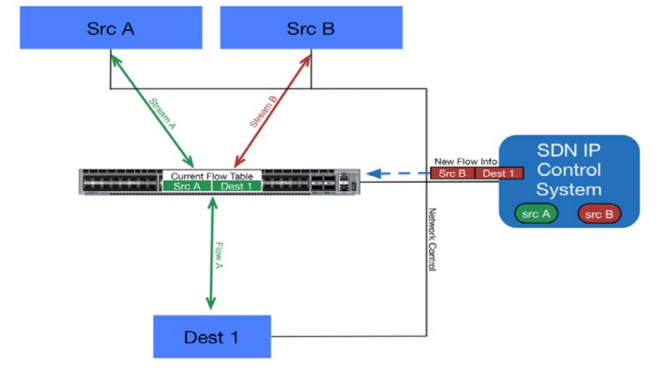

Source Timed Switching can be done precisely with frame accuracy without consuming double bandwidth as required by Destination Timed Switching. When a video switch is commanded, new IP flow rules are set up on the Ethernet switch shortly ahead of the video switching time. At the precise SMPTE RP 168 switch point the video sources change the source port in the UDP packet header to match the new flow rules on the Ethernet switch. Source Timed Switching is independent of any IP video bitrate from SDI to 4K-UHD/DCI.

For example, each source edge device “Src A” and “Src B” is capable of sending UDP multicast media streams. The Arista switch is configured with an L4 UDP-port-specific flow. The SDN IP and Studio Control System sets up the internal IP flow tables in the Ethernet switch so that multicast stream A is delivered to the “Dest 1” edge device.

All edge devices and the control system are synchronized using the Precision Time Protocol (PTP). When a clean switch from Src A to Src B is triggered via a button or command sequence, the control system first prepares a new flow table with a flow rule to steer Stream B to the destination device. This multicast stream will then utilize another UDP port.

The control system then informs the destination edge device about the upcoming video switch. Subsequently, the edge devices handling Source A and Source B are told to switch at the next video frame boundary following a specific PTP value. The Source edge devices then change the source UDP port address coming from both sources at the same synchronized time. As both flow rules in the switch have already been set up before this time, the destination edge device continues to seamlessly receive a single Real-Time Transport Protocol (RTP) multicast stream, but now coming from Source B instead of Source A. Thus, this clean IP-based video switch is essentially identical to an SDI Router clean switch.

The Studio Control System would recognize the precise time when a clean switch is needed between the sources. The broadcast device would leverage the Arista eAPI to write the DirectFlow configuration directly to the switch. DirectFlow and eAPI eliminate the need for OpenFlow support on the Studio Control System platforms, since the flow logic can be automated from the Studio Control System directly to the Arista switch.
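The sequence above (install the new flow rule first, then have both sources change their UDP source port at the same PTP-aligned frame boundary) can be illustrated with a toy simulation. This is a sketch under stated assumptions, not switch code: the `FlowSwitch` and `Source` classes, the port numbers 10000 and 10001, and the integer frame times are all hypothetical, and a real flow would also match on the multicast group address.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class FlowSwitch:
    """Toy match/action table: (source, UDP source port) -> destination names."""
    rules: dict = field(default_factory=dict)

    def add_rule(self, source, udp_port, destinations):
        self.rules[(source, udp_port)] = tuple(destinations)

    def forward(self, source, udp_port):
        # Packets that match no rule are dropped.
        return self.rules.get((source, udp_port), ())


@dataclass
class Source:
    name: str
    old_port: int
    new_port: int
    switch_time: Optional[int] = None  # PTP-aligned frame time at which to change ports

    def port_at(self, frame_time):
        if self.switch_time is not None and frame_time >= self.switch_time:
            return self.new_port
        return self.old_port


def simulate(frame_times, switch_time):
    """Return, per frame time, which sources actually reach Dest1."""
    fabric = FlowSwitch()
    fabric.add_rule("SrcA", 10000, ["Dest1"])  # rule carrying Stream A before the switch
    fabric.add_rule("SrcB", 10001, ["Dest1"])  # rule for Stream B, installed ahead of time
    sources = [
        Source("SrcA", 10000, 10001, switch_time),
        Source("SrcB", 10000, 10001, switch_time),
    ]
    received = []
    for t in frame_times:
        arrivals = [s.name for s in sources
                    if "Dest1" in fabric.forward(s.name, s.port_at(t))]
        received.append((t, arrivals))
    return received


if __name__ == "__main__":
    for frame_time, arrivals in simulate(range(5), switch_time=3):
        print(frame_time, arrivals)
```

In this model the destination sees exactly one source in every frame interval, which is the property that lets Source Timed Switching avoid the double bandwidth of the destination-timed approach.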

Audio Video Bridging (AVB)

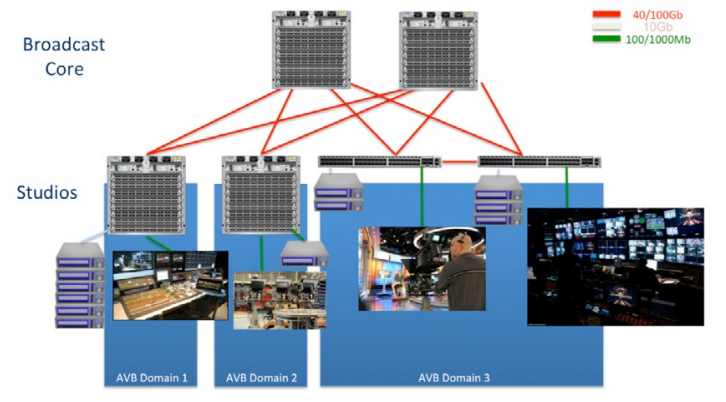

AVB comprises a set of IEEE standards that prepare the network to guarantee signal performance for time-sensitive workloads such as uncompressed audio, video and control-signal data, all running over an Ethernet network. AVB provides a plug and play solution to reduce the huge administrative overhead of configuring each element of the network for A/V requirements.

While AVB is used in many industries, a common use case for AVB is within the walls of Broadcast studios. Equipment such as A/V mixers, storage and audio consoles all need to receive uncompressed audio and video streams without interruption of the control signals as they traverse the Broadcast infrastructure. Without AVB, all these devices are connected using A/V routers that have point-to-point connections to each and every endpoint for each feed, while an endpoint connected to an AVB-enabled Ethernet interface can provide one-to-one, one-to-many and many-to-one communication.

The AVB protocols provide the Ethernet network with end-to-end bandwidth allocation, time synchronization, traffic shaping and predictable low latency. These concepts are necessary to provide reliable transport of critical broadcast content. The concepts of traffic shaping, bandwidth allocation and time synchronization over a low latency infrastructure are nothing new to COTS network equipment.

Stream Reservation Protocol (SRP) checks the available network bandwidth, from source to destination, for each stream prior to allowing a stream to become established across the Ethernet network. In conjunction with SRP, hardened networking Quality of Service (QoS) mechanisms are implemented to prioritize and forward A/V streams. Together, SRP and QoS provide a solid network foundation to ensure AVB stream data packets can reach their destinations without being impacted by “normal” IP traffic.
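The admission check that SRP performs can be pictured as an all-or-nothing reservation along the path. The sketch below is a simplified model rather than the protocol itself: the `Link` class and `reserve_stream` function are hypothetical names, and the 75% reservable fraction is an assumption based on the commonly cited AVB default, not a figure from this paper.

```python
class Link:
    """One hop on the path, tracking how much bandwidth is already reserved."""

    def __init__(self, name, capacity_mbps, reservable_fraction=0.75):
        self.name = name
        # Assumed ceiling on stream reservations; the rest stays available to other traffic.
        self.reservable_mbps = capacity_mbps * reservable_fraction
        self.reserved_mbps = 0.0

    def headroom_mbps(self):
        return self.reservable_mbps - self.reserved_mbps


def reserve_stream(path, stream_mbps):
    """Admit the stream only if every link from source to destination can carry it."""
    if any(link.headroom_mbps() < stream_mbps for link in path):
        return False  # rejected before any link is modified
    for link in path:
        link.reserved_mbps += stream_mbps
    return True


if __name__ == "__main__":
    edge = Link("talker-to-switch", 1000)
    uplink = Link("switch-to-listener", 1000)
    for demand_mbps in (400, 400, 350):
        admitted = reserve_stream([edge, uplink], demand_mbps)
        print(demand_mbps, "Mbps admitted:", admitted)
```

Checking every hop before committing any reservation mirrors the point made above: a stream is not established unless bandwidth is available end to end.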

Time synchronization of reference clocks on the AVB capable devices is accomplished through generalized Precision Time Protocol (gPTP). Endpoints and network switches must participate in gPTP to allow for the presentation time of A/V streams to arrive at the same relative time on the AVB endpoints, regardless of how much distance exists between the source and multiple destinations.
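As a rough illustration of how an endpoint or switch port aligns itself to the reference clock, the sketch below works through the peer-delay arithmetic used by gPTP-style synchronization. It is a simplification under stated assumptions: the function names are hypothetical, timestamps are plain nanosecond integers, and the neighbor rate ratio (the frequency difference between the two clocks) is taken to be exactly 1.

```python
def mean_link_delay(t1, t2, t3, t4):
    """One-way link delay from a peer-delay exchange.

    t1: request sent (local clock)      t2: request received (peer clock)
    t3: response sent (peer clock)      t4: response received (local clock)
    """
    round_trip = t4 - t1   # measured on the local clock
    turnaround = t3 - t2   # time the peer held the message, on the peer clock
    return (round_trip - turnaround) / 2


def offset_from_master(sync_origin, correction, link_delay, sync_receipt):
    """Local clock minus grandmaster time at the instant the Sync message arrived."""
    master_time_at_receipt = sync_origin + correction + link_delay
    return sync_receipt - master_time_at_receipt


if __name__ == "__main__":
    delay_ns = mean_link_delay(t1=1_000, t2=5_500, t3=6_500, t4=3_000)
    offset_ns = offset_from_master(
        sync_origin=1_000_000, correction=0, link_delay=delay_ns, sync_receipt=1_000_750
    )
    print("link delay (ns):", delay_ns)
    print("offset from master (ns):", offset_ns)
```

Because the turnaround time is subtracted out, the two clocks do not need to agree on absolute time for the link delay to be measured, which is why every hop in the AVB domain must take part in the exchange.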

Static configuration of AVB streams can be applied to the end points that don’t require frequent changes to the stream requirements. More commonly used are third party central AVB controllers for automatic discovery and enumeration control of the end points. Once the AVB capable end points are discovered in the AVB domain, the controller software provides the production team a cross point view of the AVB endpoints such that with a “click of the mouse” streams can be dynamically set-up and torn down.

Current port-to-port latency of COTS Ethernet switches is measured in nanoseconds or microseconds. Delay-sensitive digital A/V traffic requires a low latency, low jitter network along the entire network path between the AVB endpoints.

The Arista portfolio of switches allows multiple audio and video streams to be carried over a single Ethernet cable with low latency and low jitter. A single Arista switch can provide up to 1152 AVB interfaces with speeds ranging from 100Mb to 100Gb per interface.

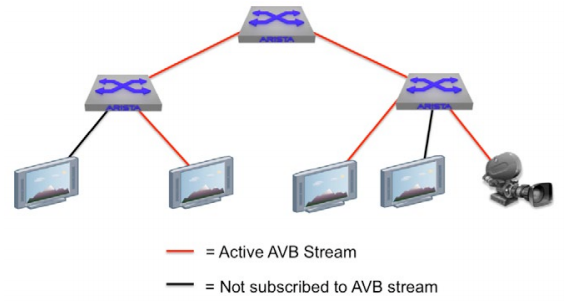

In this example, the AVB Talker (camera) is sending streams through the AVB domain while the AVB Listeners (monitors with red links) subscribe to the Talker’s stream. Not every AVB device in the infrastructure needs to subscribe to a stream (as seen by the Listeners with black links), but all of the devices could subscribe to the stream if the network and endpoints were AVB capable. The underlying AVB protocols determine the end-to-end network and host capabilities for sending/receiving the A/V stream. During this process the switches ensure that only the devices that require the stream will receive the stream. Since AVB is enabled on an interface-by-interface basis, non-AVB devices can coexist on the switches in the AVB domain without issue.

Broadcast Benefits from Ethernet/IP Migration

- Future Proof – Enterprise-level IP Networking is much more scalable in terms of ports, bandwidth and geographical reach, vastly exceeding any traditional SDI router architectures.

- Flexible and Responsive – Software Defined Networking (SDN) orchestration and control provides exceptional flexibility and speed to (re)configuring the IP infrastructure for any special workflow and delivery requirements, whether temporary or longer term.

- Legacy SDI Routers are Not Compatible – with new formats such as 4K. New television facilities and network operations centers requiring full 4K-UHD/DCI capabilities greatly benefit from a Video-over-IP architecture that can support new and emerging formats without any hardware changes.

- CAPEX and OPEX Savings – IP networks and switches with 10/25/40/50/100GbE capabilities can cost significantly less than comparable SDI router designs, due to the capacity of high-speed Ethernet and the very large market demand for these products, offering COTS (Commercial Off-The-Shelf) pricing. This delivers a much higher level of performance, capabilities, and much more flexibility than traditional SDI routers for a given investment in a medium-to-large sized television or broadcast facility. Today’s data center IP infrastructure also delivers the benefit of advanced telemetry and SDN performance monitoring, contributing to high reliability and significant operational cost savings.

- Large Savings in Equipment Footprint – A traditional 1152x1152 HD-SDI router needs around 40RU while an Arista 1152x1152 (10GbE) IP 7508E switch only requires 11RU.

  Figure: Arista 7504E and 7508E Network Switches

- The Single “Port/Cable” Advantage – A single port on an IP network can carry multiple media streams. A 10GbE port has the capacity to concurrently carry six (6) 1.5Gbps HD-SDI channels (6 x 1.5 = 9Gbps) and is bi-directional, while a single port on a traditional HD-SDI router can only carry ONE, either as Input OR Output. A 40GbE or 50GbE port could carry multiple uncompressed 4K-UHD channels.

- Future Cloud-Based Services – Use of Cloud computing, now or in the future, requires IP connectivity, enabling the adding or removing of resources and capacity through Software Defined Network controllers.
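The port-capacity and footprint figures in the list above can be checked with simple arithmetic. The sketch below is a back-of-the-envelope calculation, not a capacity planning tool: `streams_per_port` is a hypothetical helper, rates are nominal values in Mbps taken from this paper (1.5Gbps HD-SDI, 12Gbps uncompressed 4K-UHD), and packet header overhead is ignored.

```python
def streams_per_port(port_mbps, stream_mbps):
    """Whole streams that fit in one direction of a port, ignoring packet overhead."""
    return port_mbps // stream_mbps


HD_SDI_MBPS = 1_500    # nominal 1.5Gbps HD-SDI
UHD_4K_MBPS = 12_000   # uncompressed 4K-UHD at the 12Gbps cited earlier

if __name__ == "__main__":
    print("HD streams on 10GbE:", streams_per_port(10_000, HD_SDI_MBPS))
    print("4K streams on 40GbE:", streams_per_port(40_000, UHD_4K_MBPS))
    print("4K streams on 50GbE:", streams_per_port(50_000, UHD_4K_MBPS))

    # Rack space for an 1152x1152 matrix: about 40RU for HD-SDI versus 11RU for the IP switch.
    sdi_ru, ip_ru = 40, 11
    print("rack units saved:", sdi_ru - ip_ru)
    print("footprint ratio: %.1fx" % (sdi_ru / ip_ru))
```

Under these assumptions a 10GbE port carries six HD streams in each direction, while 40GbE and 50GbE ports carry three and four uncompressed 4K-UHD streams respectively.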

Conclusion

The migration of the Broadcast world to IP infrastructure is under way, as evidenced by early adoption in some very large and influential television facilities already using IP network architectures.

The establishment of IP Video Routers and IP networks in television over the next several years requires substantial efforts by Broadcasters and broadcast equipment suppliers, similar to the efforts we saw in the transformation from analog to digital television that took over a decade to be fully realized. This new paradigm shift merges the disciplines of Broadcast and IT, which requires cross-functional skills and knowledge and is sure to keep the industry busy for years to come.

The move away from proprietary and bespoke broadcast technology will eventually change the approach for many types of productions, creating new workflows that are difficult to envision today. Arista has platforms today that will provide better performing, more flexible and cost-effective broadcast services over an IP network, while also providing the foundation for the next generation of Ethernet/IP products needed for the Broadcast facility.

Arista is an active participant in IEEE and SMPTE standards to help further advance Ethernet/IP switch requirements needed for the Media and Entertainment industry.

Copyright © 2016 Arista Networks, Inc. All rights reserved. CloudVision, and EOS are registered trademarks and Arista Networks is a trademark of Arista Networks, Inc. All other company names are trademarks of their respective holders. Information in this document is subject to change without notice. Certain features may not yet be available. Arista Networks, Inc. assumes no responsibility for any errors that may appear in this document. 02-0023-01